Category: information science – Page 11

New model frames human reinforcement learning in the context of memory and habits

Humans and most other animals are known to be strongly driven by expected rewards or adverse consequences. The process of acquiring new skills or adjusting behaviors in response to positive outcomes is known as reinforcement learning (RL).

RL has been widely studied over the past decades and has even been adapted to train computational models, including some deep learning algorithms. Existing models of RL suggest that this type of learning is linked to dopaminergic pathways (i.e., neural pathways that respond to differences between expected and experienced outcomes).
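The "difference between expected and experienced outcomes" is usually called a reward prediction error. As a rough illustration, the sketch below uses the textbook delta rule, in which an expected value is nudged toward each observed reward. The function names, the learning rate, and the reward sequence are assumptions made for this example, and this is the conventional kind of RL update, not the model proposed in the paper discussed here.

```python
# Delta-rule (Rescorla-Wagner style) value update driven by a reward prediction error.
# All names and parameter values are illustrative assumptions, not from the article.

def update_value(expected, reward, learning_rate=0.1):
    """Return the new expected value and the prediction error for one trial."""
    prediction_error = reward - expected  # experienced outcome minus expected outcome
    new_expected = expected + learning_rate * prediction_error
    return new_expected, prediction_error


def run_trials(rewards, learning_rate=0.1, initial_value=0.0):
    """Apply the update over a sequence of rewards; return the value after each trial."""
    value = initial_value
    history = []
    for reward in rewards:
        value, _ = update_value(value, reward, learning_rate)
        history.append(value)
    return history


if __name__ == "__main__":
    # A choice that is always rewarded: the expected value climbs toward 1.0
    # while the prediction error shrinks from trial to trial.
    values = run_trials([1.0] * 20, learning_rate=0.2)
    print(values[0], values[-1])
```

With a constant reward, the prediction error shrinks on every trial, which is why learning in this kind of model slows as outcomes become predictable.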

Anne G. E. Collins, a researcher at the University of California, Berkeley, recently developed a new model of RL specific to situations in which people’s choices have uncertain, context-dependent outcomes and they try to learn which actions will lead to rewards. Her paper, published in Nature Human Behaviour, challenges the assumption that existing RL algorithms faithfully mirror psychological and neural mechanisms.

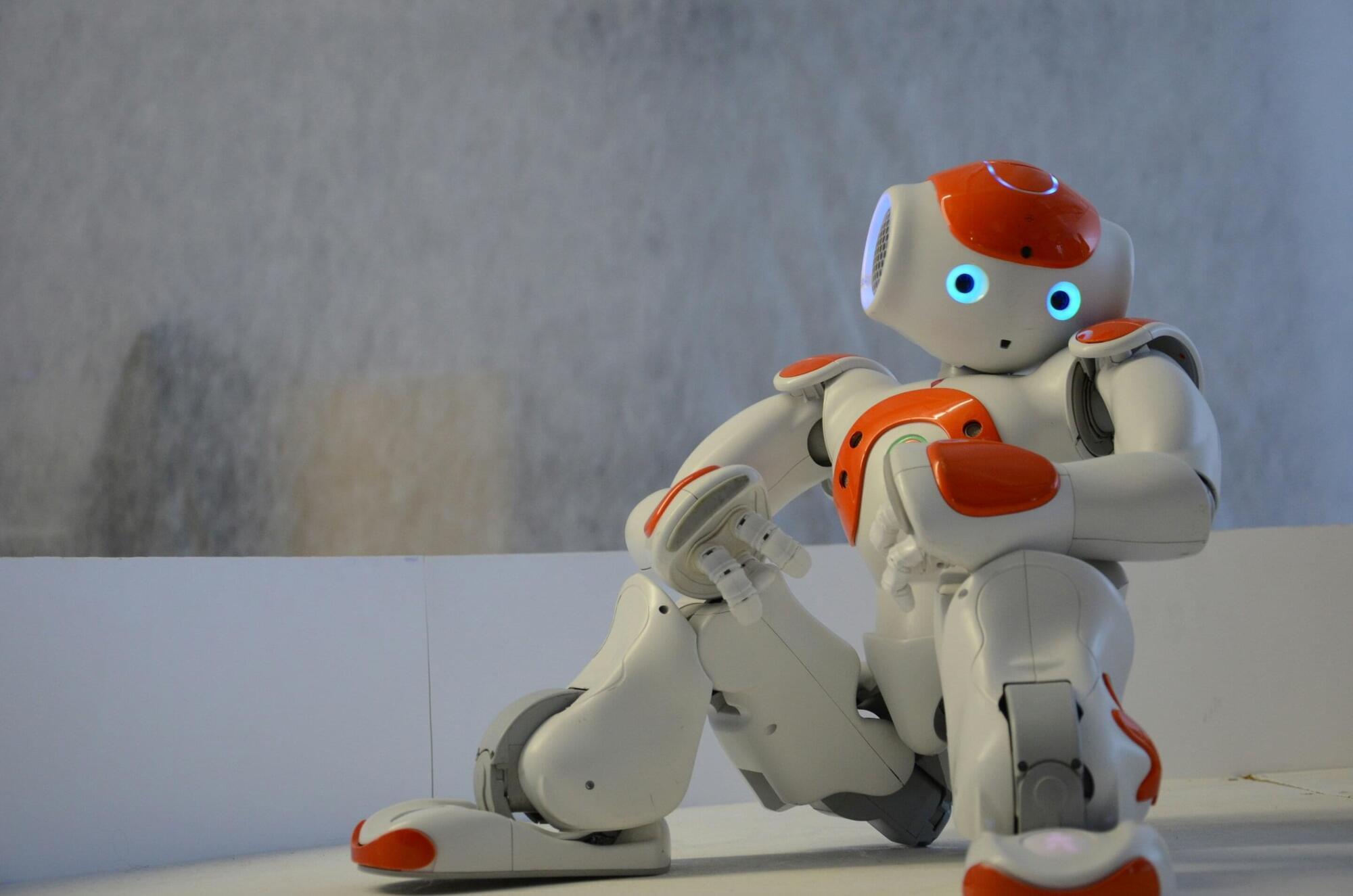

Infant-inspired framework helps robots learn to interact with objects

Over the past decades, roboticists have introduced a wide range of advanced systems that can move around in their surroundings and complete various tasks. Most of these robots can effectively collect images and other data from their surroundings, using computer vision algorithms to interpret the data and plan their future actions.

In addition, many robots leverage large language models (LLMs) or other natural language processing (NLP) models to interpret instructions, make sense of what users are saying and answer them in specific languages. Despite their ability to both make sense of their surroundings and communicate with users, most robotic systems still struggle when tackling tasks that require them to touch, grasp and manipulate objects, or come in physical contact with people.

Researchers at Tongji University and the State Key Laboratory of Intelligent Autonomous Systems recently developed a new framework designed to improve the process by which robots learn to physically interact with their surroundings.

AI Expert: We Have 2 Years Before Everything Changes! We Need To Start Protesting! — Tristan Harris

For example: “If you’re worried about immigration, you should be way more concerned about AI” — for the impact on jobs, cultural stability, and social predictability.

Ex-Google Insider and AI Expert TRISTAN HARRIS reveals how ChatGPT, China, and Elon Musk are racing to build uncontrollable AI, and warns it will blackmail humans, hack democracy, and threaten jobs…by 2027.

Tristan Harris is a former Google design ethicist and leading voice from Netflix’s The Social Dilemma. He is also co-founder of the Center for Humane Technology, where he advises policymakers, tech leaders, and the public on the risks of AI, algorithmic manipulation, and the global race toward AGI.

He explains:

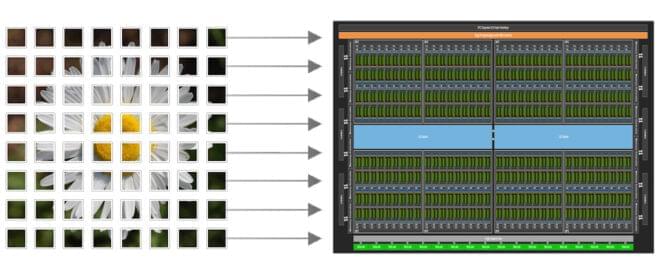

Focus on Your Algorithm—NVIDIA CUDA Tile Handles the Hardware

CUDA 13.1 introduces NVIDIA CUDA Tile, which NVIDIA describes as the largest advancement to the platform since CUDA was invented in 2006. CUDA Tile provides a virtual instruction set for tile-based parallel programming, so that algorithms can be written at a higher level while the details of specialized hardware, such as tensor cores, are abstracted away.

CUDA exposes a single-instruction, multiple-thread (SIMT) hardware and programming model for developers. This requires (and enables) you to exercise fine-grained control over how your code is executed, with maximum flexibility and specificity. However, it can also require considerable effort to write code that performs well, especially across multiple GPU architectures.

There are many libraries to help developers extract performance, such as NVIDIA CUDA-X and NVIDIA CUTLASS. CUDA Tile introduces a new way to program GPUs at a higher level than SIMT.
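To make the contrast concrete, here is a conceptual sketch in plain Python. It is not CUDA code and does not use the CUDA Tile API; the function names and the tile size are assumptions chosen for illustration. The first function describes a matrix product one output element at a time, which is roughly how a SIMT kernel assigns work to threads. The second describes the same product in terms of blocks (tiles) of data, which is the level at which a tile-based model lets the algorithm be written.

```python
# Conceptual sketch only: plain Python, not CUDA and not the CUDA Tile API.
# It contrasts element-wise indexing with tile-wise indexing for C = A @ B.

def matmul_per_element(a, b):
    """Element-level view: every (row, col) output is computed independently."""
    n, k, m = len(a), len(b), len(b[0])
    c = [[0.0] * m for _ in range(n)]
    for row in range(n):      # in a SIMT kernel, each (row, col) pair
        for col in range(m):  # would typically be handled by one thread
            c[row][col] = sum(a[row][i] * b[i][col] for i in range(k))
    return c


def matmul_tiled(a, b, tile=2):
    """Tile-level view: the loops walk over blocks of the output and inner dimension."""
    n, k, m = len(a), len(b), len(b[0])
    c = [[0.0] * m for _ in range(n)]
    for r0 in range(0, n, tile):          # one output tile at a time
        for c0 in range(0, m, tile):
            for k0 in range(0, k, tile):  # accumulate over tiles of the inner dimension
                for row in range(r0, min(r0 + tile, n)):
                    for col in range(c0, min(c0 + tile, m)):
                        acc = 0.0
                        for i in range(k0, min(k0 + tile, k)):
                            acc += a[row][i] * b[i][col]
                        c[row][col] += acc
    return c


if __name__ == "__main__":
    a = [[1, 2, 3], [4, 5, 6]]
    b = [[7, 8], [9, 10], [11, 12]]
    print(matmul_per_element(a, b))
    print(matmul_tiled(a, b))
```

Both functions compute the same result. In the tiled form the three innermost loops are the part that a tile-based programming model would leave to the compiler and hardware, so the author of the algorithm only reasons about the outer, tile-level loops.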

Memories and ideas are living organisms | Michael Levin and Lex Fridman

Lex Fridman Podcast full episode: https://www.youtube.com/watch?v=Qp0rCU49lMs.

*GUEST BIO:*

Michael Levin is a biologist at Tufts University working on novel ways to understand and control complex pattern formation in biological systems.

*EPISODE LINKS:*

Michael Levin’s X: https://twitter.com/drmichaellevin.

Michael Levin’s Website: https://drmichaellevin.org.

Michael Levin’s Papers: https://drmichaellevin.org/publications/

- Biological Robots: https://arxiv.org/abs/2207.00880

- Classical Sorting Algorithms: https://arxiv.org/abs/2401.05375

- Aging as a Morphostasis Defect: https://pubmed.ncbi.nlm.nih.gov/38636560/

- TAME: https://arxiv.org/abs/2201.10346

- Synthetic Living Machines: https://www.science.org/doi/10.1126/scirobotics.abf1571

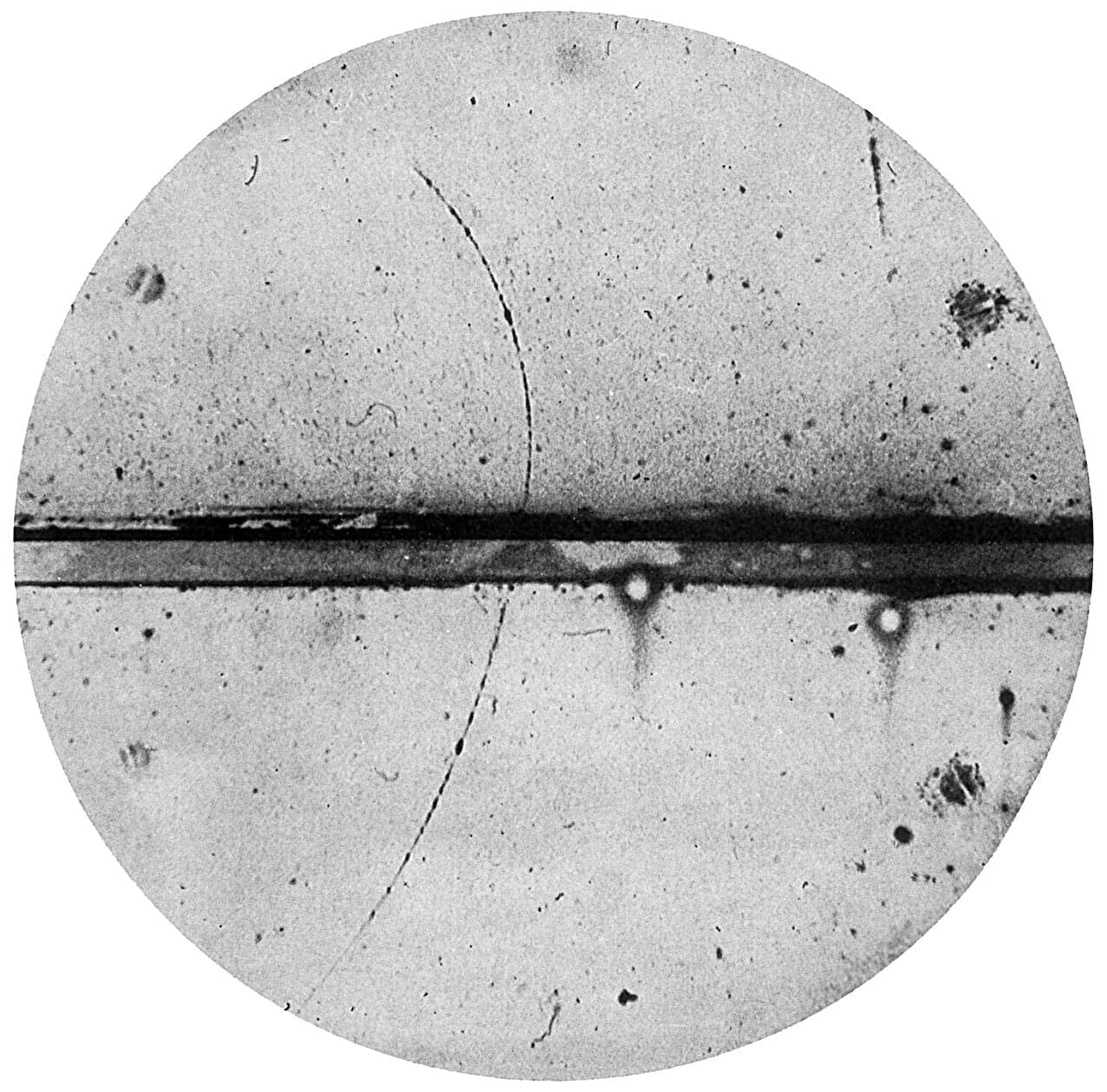

The case for an antimatter Manhattan project

Chemical rockets have taken us to the moon and back, but traveling to the stars demands something more powerful. SpaceX’s Starship can lift extraordinary masses to orbit and send payloads throughout the solar system using its chemical rockets, but it cannot fly to nearby stars at 30% of light speed and land. For missions beyond our local region of space, we need something fundamentally more energetic than chemical combustion, and physics offers a candidate: antimatter.

When antimatter encounters ordinary matter, they annihilate completely, converting mass directly into energy according to Einstein’s equation E=mc². In SI units, that c² term is approximately 10¹⁷ (about 9 × 10¹⁶ joules per kilogram of annihilated mass), an almost incomprehensibly large number. This makes antimatter roughly 1,000 times more energetic than nuclear fission, the most powerful energy source currently in practical use.
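The "roughly 1,000 times" figure can be checked with a back-of-envelope calculation. The sketch below compares the energy released per kilogram of annihilated mass with the energy per kilogram of fully fissioned uranium-235. The fission inputs (about 200 MeV released per fission and a mass number of 235) are textbook approximations assumed for this example and do not come from the article.

```python
# Back-of-envelope comparison of energy per kilogram of fuel.
# The fission inputs are textbook approximations assumed for this sketch.

C = 299_792_458.0                   # speed of light in m/s (exact by definition)
EV_TO_J = 1.602176634e-19           # joules per electronvolt (exact by definition)
ATOMIC_MASS_UNIT = 1.66053907e-27   # kilograms, approximate


def annihilation_energy_per_kg():
    """Energy in joules per kilogram of annihilated mass (matter plus antimatter)."""
    return C ** 2  # E = m * c^2 with m = 1 kg


def fission_energy_per_kg(mev_per_fission=200.0, mass_number=235):
    """Approximate energy in joules per kilogram of fully fissioned U-235."""
    energy_per_atom = mev_per_fission * 1e6 * EV_TO_J
    mass_per_atom = mass_number * ATOMIC_MASS_UNIT
    return energy_per_atom / mass_per_atom


if __name__ == "__main__":
    e_annihilation = annihilation_energy_per_kg()
    e_fission = fission_energy_per_kg()
    print(f"annihilation: {e_annihilation:.2e} J/kg")
    print(f"fission:      {e_fission:.2e} J/kg")
    print(f"ratio:        {e_annihilation / e_fission:.0f}")
```

Under these assumptions the ratio comes out at about one thousand, consistent with the comparison in the text. Note that the annihilation figure is per kilogram of total reacting mass, half of which is ordinary matter.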

As a source of energy, antimatter could enable spacecraft to reach nearby stars at significant fractions of the speed of light. A detailed technical analysis by Casey Handmer, CEO of Terraform Industries, outlines how humanity could develop practical antimatter propulsion within existing spaceflight budgets. Doing so would require breakthroughs in three critical areas: production efficiency, reliable storage systems, and engine designs that can safely harness the most energetic fuel physically possible.