Image and video editing are two of the most popular applications for computer users. With the advent of Machine Learning (ML) and Deep Learning (DL), image and video editing have been increasingly studied using a range of neural network architectures. Until very recently, most DL models for image and video editing were supervised and, more specifically, required training data consisting of paired inputs and outputs from which to learn the details of the desired transformation. Lately, end-to-end learning frameworks have been proposed that require only a single image as input to learn the mapping to the desired edited output.

Video matting is a specific video editing task. The term “matting” dates back to the 19th century, when glass plates of matte paint were set in front of a camera during filming to create the illusion of an environment that was not present at the filming location. Nowadays, the composition of multiple digital images follows a similar procedure. A compositing formula expresses each image as a linear combination of its foreground and background components, weighting the intensity of each.

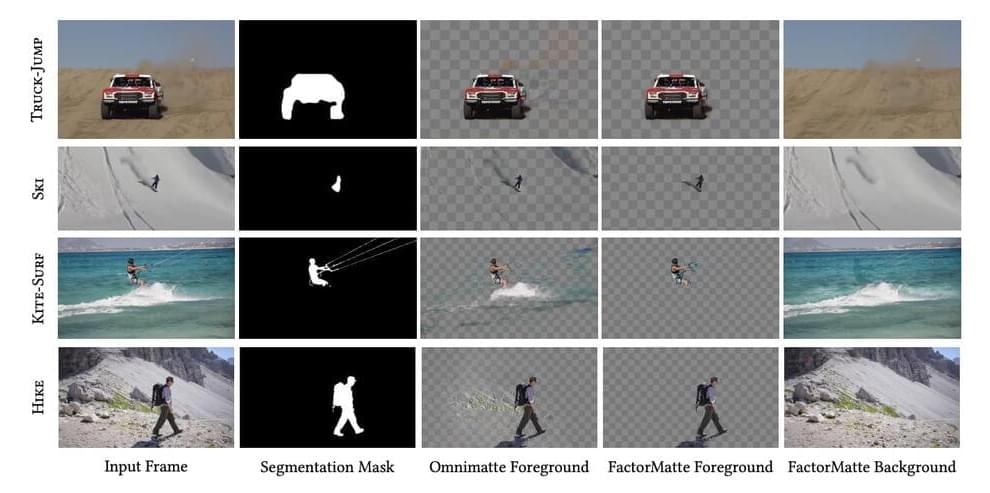
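To make the linear combination concrete, the following is a minimal NumPy sketch of compositing. It assumes the formula takes the standard alpha-compositing form, I = αF + (1 − α)B, where α is a per-pixel opacity in [0, 1]; the function name `composite` and the toy arrays are illustrative choices, not taken from the text.

```python
import numpy as np


def composite(foreground, background, alpha):
    """Blend a foreground over a background using a per-pixel alpha matte.

    Assumes the standard compositing form I = alpha * F + (1 - alpha) * B,
    with alpha in [0, 1]. `composite` is a hypothetical helper name.
    """
    fg = np.asarray(foreground, dtype=np.float64)
    bg = np.asarray(background, dtype=np.float64)
    a = np.asarray(alpha, dtype=np.float64)
    # Add a trailing channel axis so an (H, W) matte broadcasts over (H, W, C).
    if a.ndim == fg.ndim - 1:
        a = a[..., np.newaxis]
    return a * fg + (1.0 - a) * bg


# Toy example: a 1x3 RGB image with a white foreground and a black background.
fg = np.ones((1, 3, 3))
bg = np.zeros((1, 3, 3))
alpha = np.array([[0.0, 0.5, 1.0]])  # fully background, half blend, fully foreground
image = composite(fg, bg, alpha)
```

Matting is the inverse problem: given only the composite `image`, estimate `alpha` (and usually the foreground) for every pixel, which is underdetermined without further assumptions.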

Although powerful, this process has some limitations. It requires an unambiguous factorization of the image into foreground and background layers, which are then assumed to be independently treatable. In some situations, such as video matting, where the input is a sequence of temporally and spatially dependent frames, the layer decomposition becomes a complex task.