Here I solved ten Fourier and Laplace problems.
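Here is a minimal worked example of the kind of Laplace problem meant here. It is chosen for illustration and is not taken from the ten problems themselves. The transform of $e^{at}$, for a real constant $a$, follows directly from the definition $\mathcal{L}\{f\}(s) = \int_0^\infty e^{-st} f(t)\,dt$:

```latex
\mathcal{L}\{e^{at}\}(s)
  = \int_0^\infty e^{-st} e^{at}\,dt
  = \int_0^\infty e^{-(s-a)t}\,dt
  = \left[ -\frac{e^{-(s-a)t}}{s-a} \right]_0^\infty
  = \frac{1}{s-a}, \qquad \operatorname{Re}(s) > a.
```

The condition $\operatorname{Re}(s) > a$ is what makes $e^{-(s-a)t}$ vanish as $t \to \infty$, so only the lower limit contributes.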

Category: mathematics

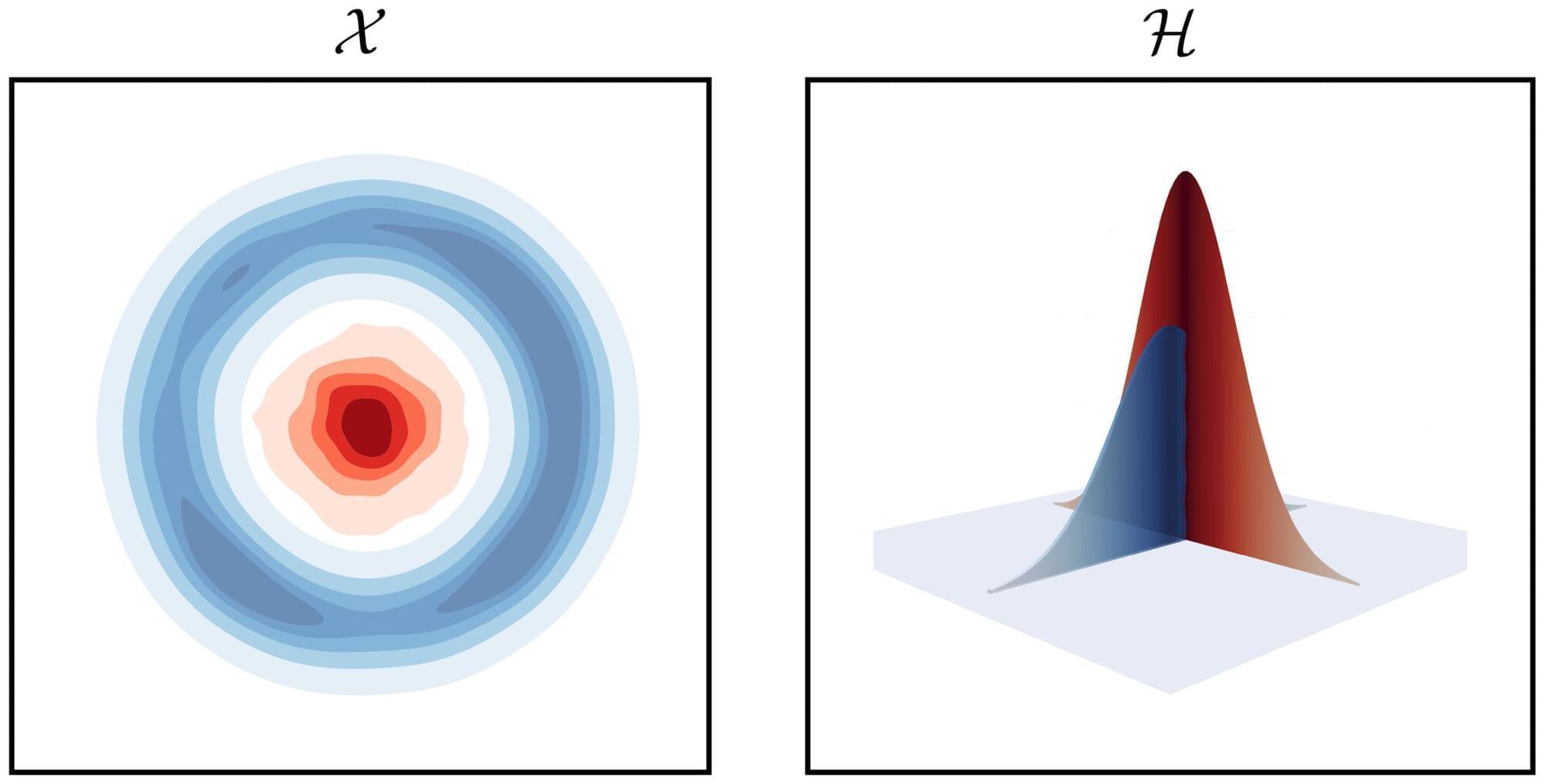

Hidden geometry explains why kernel methods separate complex data so well

Are two sets of data genuinely different, or are the apparent differences just due to chance? This question, known as the two-sample testing problem, is notoriously difficult for modern datasets. These are often high-dimensional and complex, and the differences between them can take countless subtle forms.

“Simply put, we don’t know what differences to look for, the possibilities are bewildering,” says Professor Victor Panaretos at EPFL’s Institute of Mathematics.

To address the problem, mathematicians have developed so-called “kernel methods,” which have become powerful tools widely used in fields such as genomics, finance, and artificial intelligence.
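The article does not say which kernel statistic the EPFL work studies. As a hedged illustration, one widely used kernel two-sample statistic is the maximum mean discrepancy (MMD). It compares how similar points are within each sample with how similar they are across the two samples. The sketch below is a minimal pure-Python version that rests on two assumptions: a Gaussian kernel with a hand-picked bandwidth, and a permutation test that turns the statistic into a p-value. All function names are hypothetical.

```python
import math
import random


def gaussian_kernel(x, y, bandwidth=1.0):
    """Gaussian (RBF) kernel: exp(-||x - y||^2 / (2 * bandwidth^2))."""
    sq_dist = sum((a - b) ** 2 for a, b in zip(x, y))
    return math.exp(-sq_dist / (2.0 * bandwidth ** 2))


def mmd_squared(xs, ys, bandwidth=1.0):
    """Unbiased estimate of the squared maximum mean discrepancy between two samples."""
    m, n = len(xs), len(ys)
    if m < 2 or n < 2:
        raise ValueError("each sample needs at least two points")
    # Mean similarity within each sample, skipping self-pairs (i == j)
    k_xx = sum(gaussian_kernel(xs[i], xs[j], bandwidth)
               for i in range(m) for j in range(m) if i != j) / (m * (m - 1))
    k_yy = sum(gaussian_kernel(ys[i], ys[j], bandwidth)
               for i in range(n) for j in range(n) if i != j) / (n * (n - 1))
    # Mean similarity across the two samples
    k_xy = sum(gaussian_kernel(x, y, bandwidth) for x in xs for y in ys) / (m * n)
    return k_xx + k_yy - 2.0 * k_xy


def permutation_test(xs, ys, bandwidth=1.0, num_permutations=200, seed=0):
    """Return (observed MMD^2, p-value) by reshuffling which points belong to which sample."""
    rng = random.Random(seed)
    observed = mmd_squared(xs, ys, bandwidth)
    pooled = list(xs) + list(ys)
    at_least_as_extreme = 0
    for _ in range(num_permutations):
        # Under the null hypothesis the sample labels are exchangeable
        rng.shuffle(pooled)
        perm_x, perm_y = pooled[:len(xs)], pooled[len(xs):]
        if mmd_squared(perm_x, perm_y, bandwidth) >= observed:
            at_least_as_extreme += 1
    # The +1 terms count the observed split itself, so the p-value is never zero
    return observed, (at_least_as_extreme + 1) / (num_permutations + 1)
```

Calling `permutation_test(xs, ys)` on two lists of points returns the statistic and a p-value. A small p-value suggests the samples come from different distributions. The bandwidth is the main design choice. In practice it is often set from the data, for example with the median pairwise distance, which this sketch does not do.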

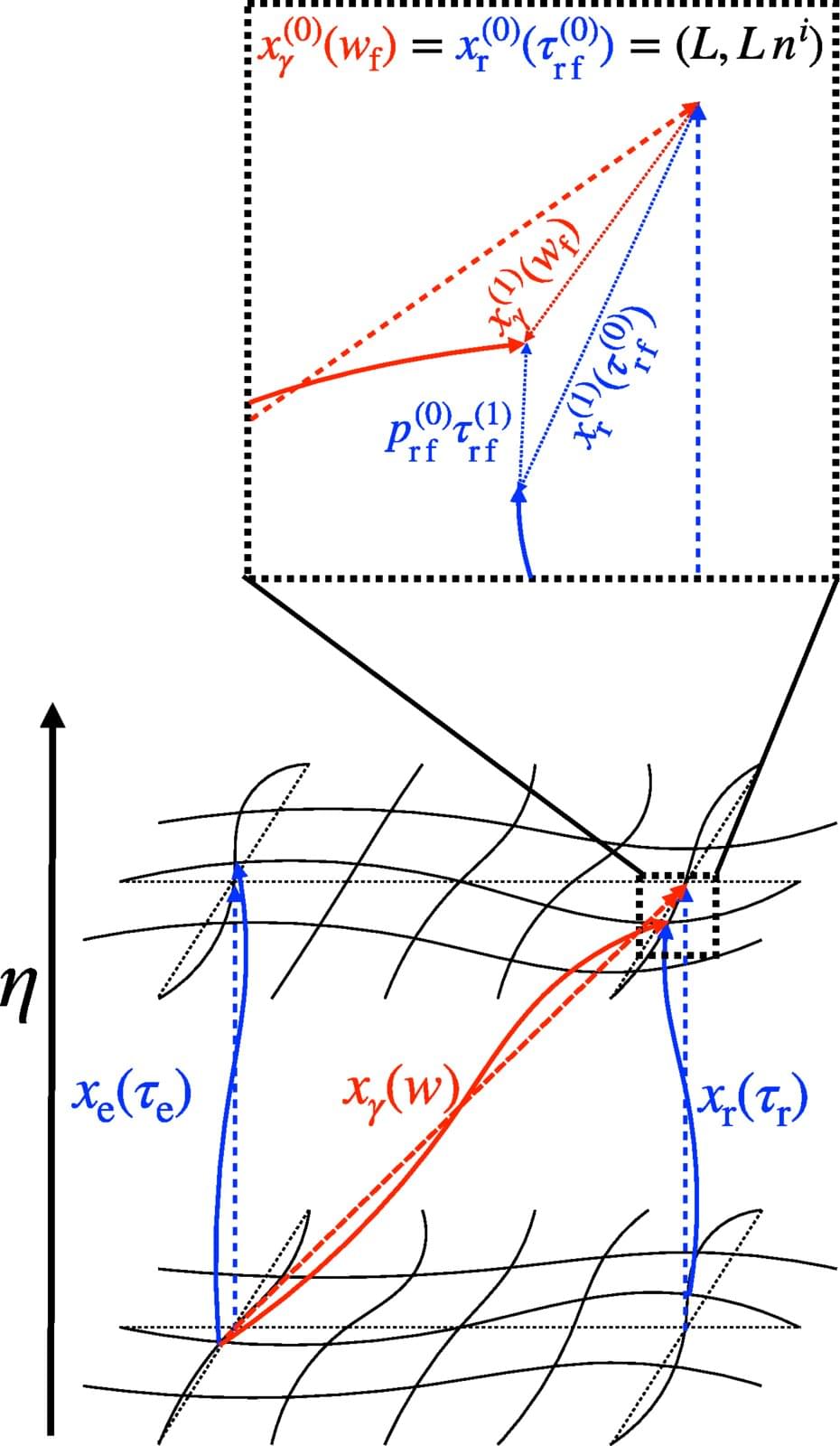

Measuring gravitational waves in a humming universe with a coordinate-free approach

Gravitational waves are tiny ripples in spacetime. Their first direct detection in 2015 marked a revolutionary moment in astronomy. Today, we have a thorough understanding of signals that travel far from their sources through quiet, nearly empty space, such as those emitted when black holes merge. In this case, the wave can be treated as a small disturbance on a silent background. The distinction between “background” and “wave” is clear, and the quantity the detector measures, a tiny stretching and squeezing, is well defined.

In cosmology, however, things are more subtle. The focus shifts to the universe in its entirety—encompassing spacetime and everything contained within it, such as stars, black holes and galaxies. The background itself is dynamic. Small fluctuations in density and velocity gently stir spacetime everywhere, blurring the boundary with the wave.

But what exactly does a gravitational-wave detector measure when the entire universe is gently vibrating? Until now, theoretical predictions depended entirely on the choice of mathematical coordinates. Yet the only meaningful quantity is what a real instrument records, and that must be independent of coordinates.

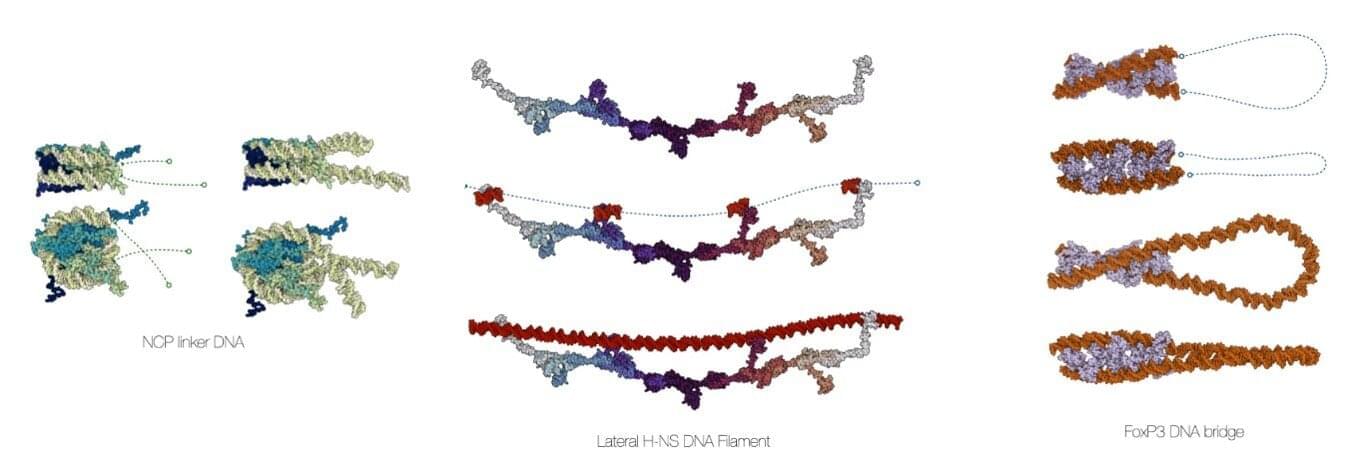

Open-source software unlocks rapid DNA structure generation and analysis in one workflow

Computational chemists at the University of Amsterdam’s Van ’t Hoff Institute for Molecular Sciences have developed a comprehensive software suite to create accurate models of DNA in biomolecular assemblies. Called MDNA, the user-friendly molecular modeling toolkit helps biochemists, molecular biologists, bioinformaticians, and biophysicists visualize and analyze DNA structures and perform accurate simulations.

The development of the MDNA suite, led by associate professor Jocelyne Vreede, has been presented in a paper in Nucleic Acids Research.

The software is open-source and publicly available through Figshare and GitHub. It is easily accessible, providing inspiration for any scientist with an interest in DNA. It has been thoroughly tested by students in mathematics, chemistry, and biology, some of whom had hardly any programming experience.

Scientists identify a previously overlooked brain cell type that may explain why human memory has no known upper limit

The human brain contains roughly 86 billion neurons. That number appears in almost every popular account of memory and intelligence, and it tends to carry an implicit argument: that the scale of human cognition follows from the scale of this cell count. What is less often mentioned is that the brain contains a roughly comparable number of a different cell type entirely, one that researchers have treated, for most of the history of neuroscience, as little more than biological scaffolding.

A paper published on 23 May in the Proceedings of the National Academy of Sciences puts forward a new hypothesis about what those cells, called astrocytes, might actually be doing. The work comes from a team at MIT: lead author Leo Kozachkov, Jean-Jacques Slotine, a professor of mechanical engineering and brain and cognitive sciences, and Dmitry Krotov of the MIT-IBM Watson AI Lab, who is the paper’s senior author. Their claim is not that astrocytes have been misunderstood in any dramatic sense; it is the more careful suggestion that they may be doing computational work that neurons, on their own, cannot account for.

This is a hypothesis supported by a mathematical model. The experimental work needed to test it has not yet been done.

What If Scientists Already PROVED We’re In A Simulation? | Truth By Lisa Randall

Bell’s theorem. Maldacena’s holographic proof. Wheeler’s participatory universe.

Three independent bodies of peer-reviewed physics — all pointing at the same unsettling answer.

What if the simulation hypothesis isn’t a thought experiment? What if the physics we already have — quantum entanglement, the holographic principle, the measurement problem — is the proof?

In this video, Harvard theoretical physicist Lisa Randall walks through the three experiments and mathematical proofs that, taken together, describe a universe that functions in every measurable way like a simulation. Not as metaphor. As structure.

We cover:

→ Alain Aspect’s 1982 Bell test experiment and what it actually proved about local reality.

→ The Bekenstein-Hawking holographic bound — why information scales with surface area, not volume.

→ Maldacena’s AdS/CFT correspondence — the proof that a 3D universe is dual to a 2D information system.

→ Wheeler’s delayed choice experiment and the participatory universe.

→ What the fine-tuning problem looks like inside a simulation framework.

→ Why you — the observer — are not peripheral to the physics. You are part of the mechanism.

This is Episode 1 of The Proof Series — a weekly deep-dive into peer-reviewed science that challenges everything you think you know about reality.

New episode every Thursday.

— Lisa Randall is a theoretical physicist and professor at Harvard University, author of Warped Passages and Dark Matter and the Dinosaurs, and one of the most cited physicists alive.

#SimulationTheory #QuantumPhysics #HolographicUniverse.

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━

TIMESTAMPS / CHAPTERS

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━

00:00 — The proof nobody is talking about.

01:10 — What \

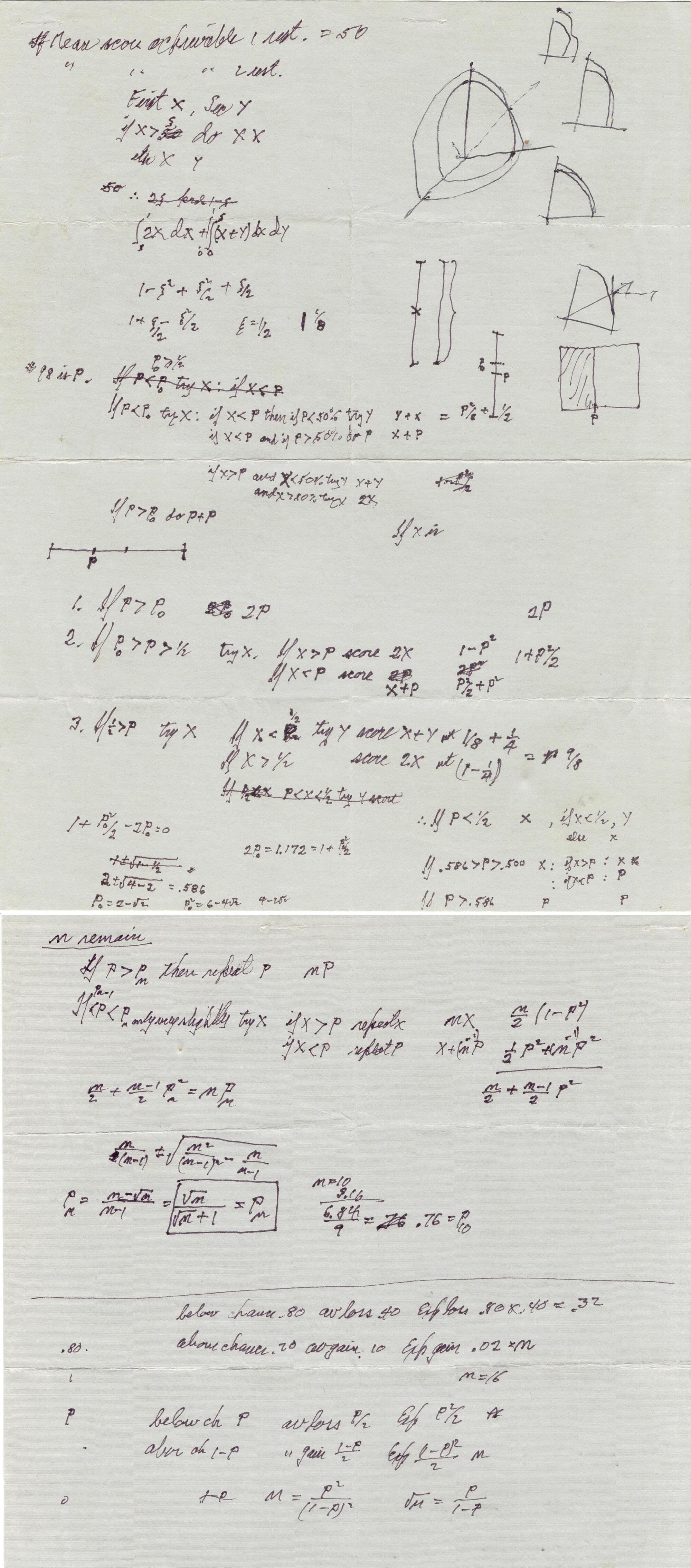

How a Richard Feynman formula could explain your dining habits in a new city

One of the dilemmas facing anyone in a new and unfamiliar city is where to dine out. You might consult guides, speak to locals, check reviews, and ultimately, try your luck. But if you’re there for a while, at some point you’re going to be asking yourself whether to visit new eateries or stick to the ones you’ve already tried and liked.

This is a classic explore-exploit dilemma, and one that the late physicist and Nobel laureate Richard Feynman pondered during a restaurant meal with a friend in the 1970s. His companion was debating whether to order his favorite dish or try something new. Feynman turned the question into a math problem and solved it there and then, scribbling his workings on pieces of paper.

Feynman, who died in 1988, never published his solution, but researchers came across his handwritten notes and not only deciphered them, but also put the solution to the test.
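The article does not reproduce Feynman's notes. To illustrate the explore-exploit trade-off only, the sketch below uses a deliberately simple model of our own. It assumes one meal per visit and restaurant qualities drawn independently from Uniform(0, 1). The strategy is to try `k` new places and then keep returning to the best of them. This is not Feynman's solution, and the function names are hypothetical.

```python
def expected_enjoyment(k, total_meals):
    """Expected total enjoyment of: try k new restaurants, then keep revisiting the best.

    Simplifying assumptions (hypothetical model, not Feynman's): one meal per visit,
    and each restaurant's quality is an independent Uniform(0, 1) draw that equals
    the enjoyment of every meal there.
    """
    if not 1 <= k <= total_meals:
        raise ValueError("k must be between 1 and total_meals")
    explore = k * 0.5                  # a new restaurant is worth 1/2 on average
    best_of_k = k / (k + 1)            # expected maximum of k Uniform(0, 1) draws
    exploit = (total_meals - k) * best_of_k
    return explore + exploit


def best_exploration_count(total_meals):
    """Number of new restaurants to try before settling down, found by brute force."""
    return max(range(1, total_meals + 1),
               key=lambda k: expected_enjoyment(k, total_meals))
```

For a stay of 30 meals, `best_exploration_count(30)` returns 7. That is close to the continuous optimum sqrt(2(M + 1)) - 1 ≈ 6.9, obtained by setting the derivative of this model's expected enjoyment to zero. The lesson of the toy model is that exploring should take up a growing but shrinking-fraction share of a longer stay.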

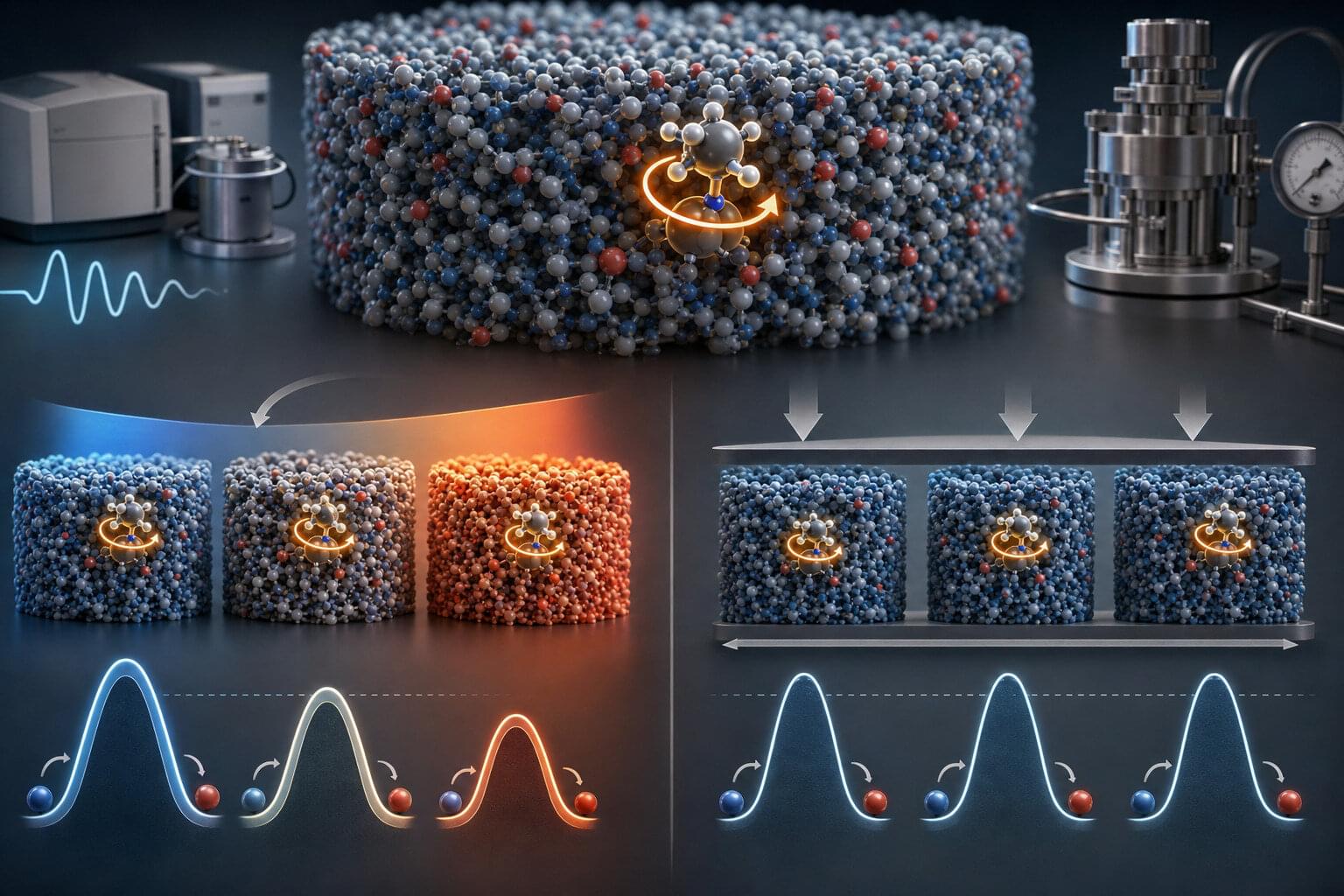

Molecular glasses solve long-standing Arrhenius paradox

Glasses are non-crystalline solids in which molecules and atoms are arranged in a disordered pattern rather than a regular crystal lattice. Glassy materials are widely used, for instance in the synthesis of pharmaceuticals and in the development of electronic and optical devices.

When studying molecular motion and other changes in materials and substances, physicists commonly rely on the so-called Arrhenius model. This mathematical framework, introduced by Svante Arrhenius in 1889, describes how temperature affects the rate of a thermally activated chemical reaction or physical process.

Past studies have shown that when the Arrhenius model is applied to molecular glasses, it yields unrealistically small pre-exponential factors. Pre-exponential factors are values that describe the intrinsic timescale of the movement of molecules without considering temperature effects.
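The article does not give the functional form it uses. As a hedged sketch, one common way to write the Arrhenius model for a relaxation process is τ(T) = τ₀ exp(E_a / (k_B T)). Here τ₀ is the pre-exponential factor (the intrinsic timescale) and E_a is an activation energy. Taking logarithms gives a straight line in 1/T, so both parameters can be estimated by linear least squares. The function names, the units (seconds, kelvin, electronvolts), and the synthetic data are assumptions for illustration.

```python
import math

BOLTZMANN_EV = 8.617333262e-5  # Boltzmann constant in eV/K


def arrhenius_time(tau0, activation_energy_ev, temperature_k):
    """Relaxation time tau(T) = tau0 * exp(Ea / (k_B * T))."""
    return tau0 * math.exp(activation_energy_ev / (BOLTZMANN_EV * temperature_k))


def fit_arrhenius(temperatures_k, times_s):
    """Least-squares fit of ln(tau) = ln(tau0) + (Ea / k_B) * (1 / T).

    Returns (tau0 in seconds, Ea in eV).
    """
    xs = [1.0 / t for t in temperatures_k]   # inverse temperature
    ys = [math.log(tau) for tau in times_s]  # log relaxation time
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx                    # equals Ea / k_B
    intercept = mean_y - slope * mean_x  # equals ln(tau0)
    return math.exp(intercept), slope * BOLTZMANN_EV
```

Fitting noise-free synthetic data generated with τ₀ = 10⁻¹³ s and E_a = 0.5 eV recovers those values. With measured data, the fitted τ₀ is the pre-exponential factor that, according to the article, comes out unrealistically small for molecular glasses.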