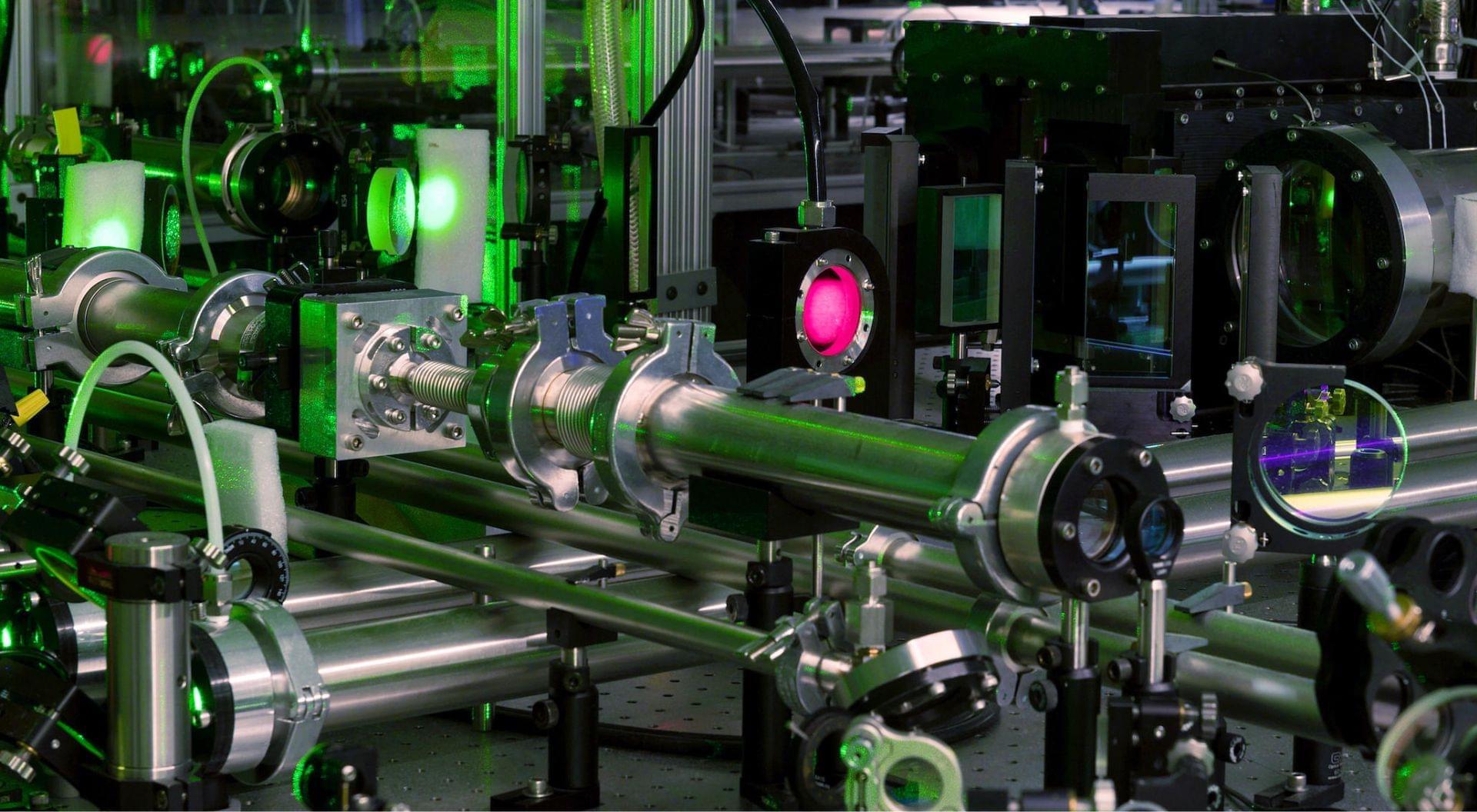

Using a novel simulation model based on machine learning, an international research team at GSI/FAIR has succeeded in gaining a deeper understanding of element formation in stellar events such as neutron star mergers. For the first time, the scientists used deep learning with a neural network to model the energy release during r-process nucleosynthesis in hydrodynamic simulations. The results are published in the journal Physical Review D.

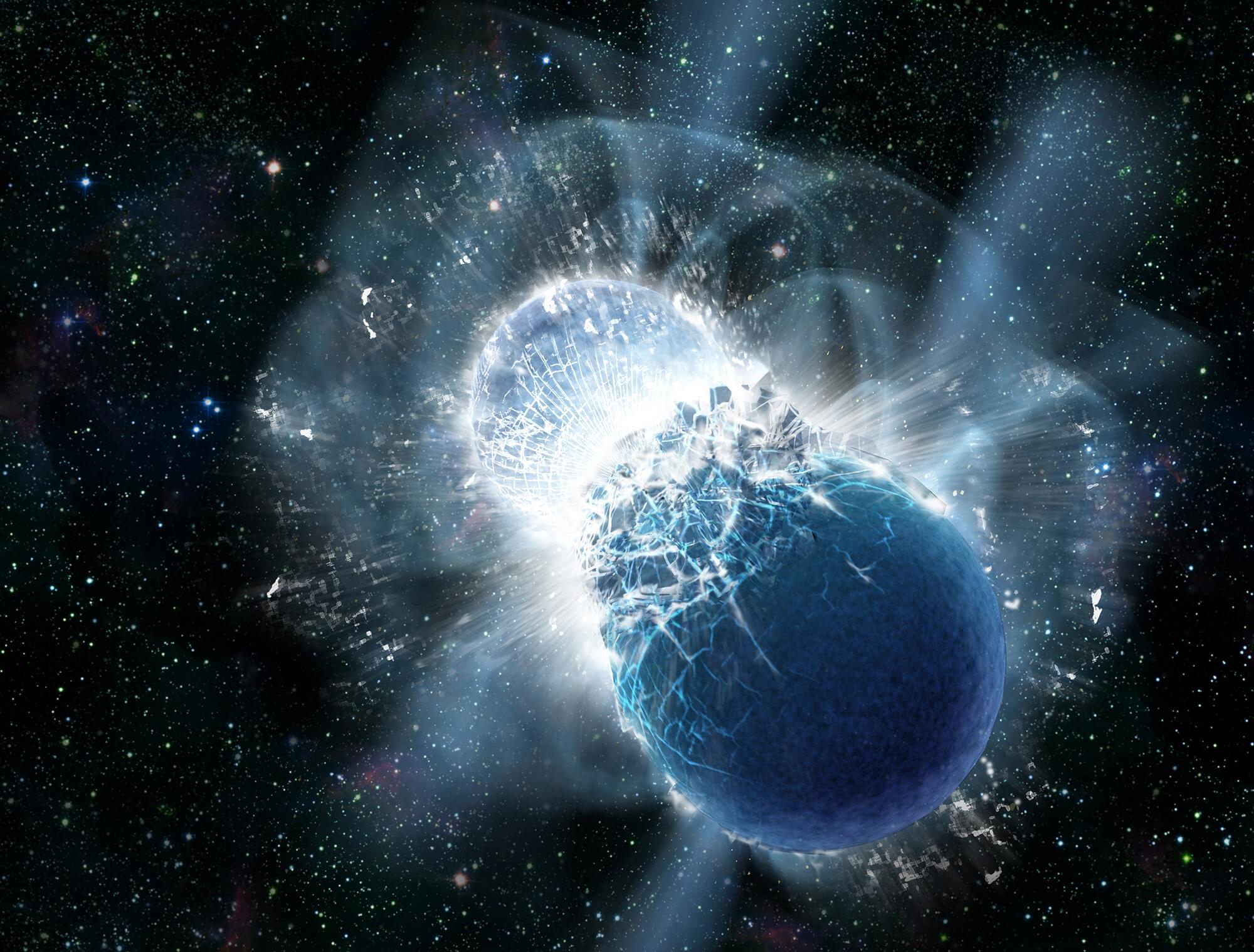

Many of the chemical elements we know are created in massive stellar events such as exploding stars or neutron star mergers. These events release enormous amounts of energy, allowing for the production of heavy nuclides. One key nuclear production process is the rapid neutron-capture process, or r-process, in which existing nuclei capture free neutrons, some of which are then converted into protons through beta decay, creating larger, heavier atomic nuclei.

“Researchers around the world strive to make these complex reactions understandable through theoretical simulations. However, modeling all parameters requires incredible computing power, which is why the models often have to be simplified,” said Dr. Oliver Just, first author of the publication and a researcher in the Nuclear Astrophysics & Structure Department at GSI/FAIR. “Our new model, RHINE, which uses artificial intelligence, offers an efficient alternative.”