The scale of chemistry simulations with quantum computing has increased dramatically in just the last few months. In the latest milestone for the field, researchers from Cleveland Clinic, RIKEN, and IBM used a quantum-centric supercomputing (QCSC) framework to calculate the electronic structure of a pair of large protein-ligand complexes, reaching a scale of 12,635 atoms in the largest simulation.

The molecules were T4-Lysozyme, a member of a family of proteins involved in the immune system's degradation of peptidoglycans in bacterial membranes, and Trypsin, which is produced in the pancreas and used in digestion. The team simulated these proteins bound to molecules they interact with in nature and immersed in liquid water, at scales of 11,608 atoms and 12,635 atoms, respectively. Bringing together an international team of researchers from across the United States and Japan made it possible to develop the algorithm and workflow enhancements needed to reach this milestone.

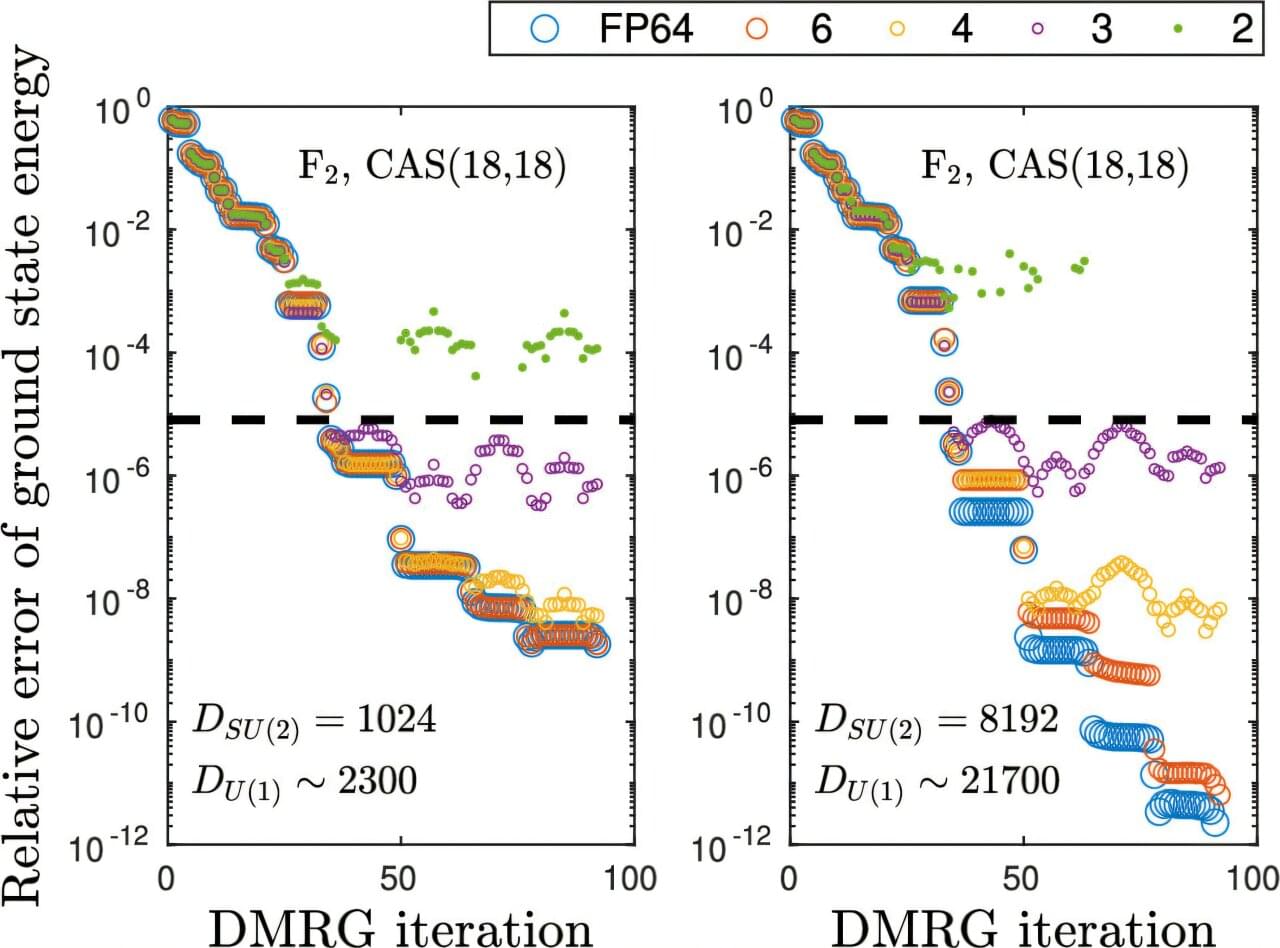

The researchers achieved this scale just four months after modeling the 303-atom miniprotein Trp-cage using quantum computing for the first time. Today’s new result not only demonstrates a roughly 40-fold increase in system size compared to the Trp-cage result, but also represents a 210-fold improvement in accuracy over previous state-of-the-art QCSC approaches in a specific step of the workflow.