With $500 million in funding and a reported $2.5 billion valuation, Flourish wants to reinvent AI by putting real neurons under the microscope.

The problem? Human brains (and animal brains, too) are incredibly complex. While handcrafted models built by experts are great starting points, they often oversimplify things and miss the messy, rich reality of actual behavior. On the flip side, using powerful, flexible AI to analyze data can capture that richness, but AI usually gives us a “black box”: it finds patterns but can’t explain *why* or *how* it found them, leaving scientists to do the heavy lifting of figuring out the rules.

Scientific models are widely used across the natural sciences as an interface between scientific theories and empirical data [1]. Such models play a key role, for example, in the study of human and animal learning, where they express algorithmic hypotheses and relate them to psychology and neuroscience data [2, 3]. These models are traditionally handcrafted by expert researchers based on existing theory or new insights. Such handcrafted models, however, are now known to fall short of capturing the full richness of behavior, even in their narrow domains [4–7]. An alternative data-driven approach has emerged, seeking to discover new insights by fitting and interpreting flexible models [8–11]. However, these tools require substantial human effort to derive insight from data, and it has been unclear how to discover new ideas from data efficiently. Here, we present DataDIVER, a general approach for automatically discovering computational models from data, and demonstrate that these models surface novel mechanistic insights into human and animal learning. Our approach delivers models that take the form of short computer programs, which are optimized both to fit data well and to be simple. These programs explicitly connect with existing theoretical frameworks and are readily understandable by human scientists. They can also be used to make novel predictions, some of which we show are borne out in re-analysis of existing data. General-purpose tools for surfacing new ideas from data, especially in combination with the large datasets that are increasingly available in many fields, stand to dramatically accelerate scientific discovery.
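The text does not spell out DataDIVER's objective, so the following is only a toy sketch of the general idea of scoring candidate programs on both fit and simplicity. Everything in it is a hypothetical assumption: the two hand-written candidate programs (a constant-bias model and a standard Q-learning model with a logistic choice rule), the synthetic two-armed bandit data, the coarse parameter grids, the penalty weight `LAMBDA`, and the use of syntax-tree node count as the simplicity measure.

```python
import ast
import math
import random

# Hypothetical candidate programs, kept as source text so their size can be measured.
# Each returns P(choose arm 1) on every trial, given the choice and reward history.
CANDIDATES = {
    "bias_only": """
def model(choices, rewards, params):
    (b,) = params
    return [1 / (1 + math.exp(-b)) for _ in choices]
""",
    "q_learning": """
def model(choices, rewards, params):
    alpha, beta = params
    q = [0.5, 0.5]
    probs = []
    for c, r in zip(choices, rewards):
        probs.append(1 / (1 + math.exp(-beta * (q[1] - q[0]))))
        q[c] += alpha * (r - q[c])
    return probs
""",
}
# Coarse parameter grids searched for each candidate (hypothetical).
GRIDS = {
    "bias_only": [(b / 4,) for b in range(-12, 13)],
    "q_learning": [(a / 10, beta) for a in range(1, 10) for beta in (1, 2, 4, 8)],
}
LAMBDA = 0.5  # hypothetical weight trading goodness of fit against program size


def compile_program(src):
    namespace = {"math": math}
    exec(src, namespace)  # trusted, hand-written source only
    return namespace["model"]


def complexity(src):
    """Simplicity proxy: number of nodes in the program's syntax tree."""
    return sum(1 for _ in ast.walk(ast.parse(src)))


def nll(model, params, choices, rewards):
    """Negative log-likelihood of the observed binary choices."""
    total = 0.0
    for c, p in zip(choices, model(choices, rewards, params)):
        p = min(max(p, 1e-9), 1 - 1e-9)
        total -= math.log(p if c == 1 else 1 - p)
    return total


def simulate_agent(n_trials=300, alpha=0.3, beta=4.0, seed=0):
    """Synthetic choices from a Q-learner on a bandit with reward rates 0.2 and 0.8."""
    rng = random.Random(seed)
    q, choices, rewards = [0.5, 0.5], [], []
    for _ in range(n_trials):
        p1 = 1 / (1 + math.exp(-beta * (q[1] - q[0])))
        c = 1 if rng.random() < p1 else 0
        r = 1.0 if rng.random() < (0.8 if c == 1 else 0.2) else 0.0
        q[c] += alpha * (r - q[c])
        choices.append(c)
        rewards.append(r)
    return choices, rewards


def score_all(choices, rewards):
    """Score = best-fit NLL over the grid + LAMBDA * program size (lower is better)."""
    scores = {}
    for name, src in CANDIDATES.items():
        model = compile_program(src)
        best_fit = min(nll(model, p, choices, rewards) for p in GRIDS[name])
        scores[name] = best_fit + LAMBDA * complexity(src)
    return scores


if __name__ == "__main__":
    choices, rewards = simulate_agent()
    scores = score_all(choices, rewards)
    print(scores, "-> preferred:", min(scores, key=scores.get))
```

Which candidate wins depends on `LAMBDA` and on how much data there is: a larger penalty favours the shorter program even when it fits worse. Keeping candidates as source strings makes program size measurable, at the cost of using `exec`, which should only ever be applied to trusted code.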

The authors have declared no competing interest.

Abstract Despite substantial progress in identifying neural correlates of consciousness, no unified quantitative framework currently derives a formally specified order parameter for conscious-state organisation from established neurophysiological principles, or links thalamocortical coordination dynamics to measurable state transitions across pharmacological, pathological, and perturbational conditions through a single computational formalism. We propose a neurocomputational theoretical framework in which conscious states are associated with metastable regimes of large-scale thalamocortical coordination operating near critical dynamical boundaries. The framework is formalised through a dynamic coordination functional Φ(t), defined as a surface integral over the thalamocortical interface and directly operationalisable from high-density EEG as a weighted combination of gamma-band power spectral density, thalamocortical coherence, and theta-gamma phase-amplitude coupling. The thalamic reticular nucleus (TRN) is identified as the anatomical implementation of the control parameter governing proximity to the critical point, grounded in a Wilson-Cowan model of TRN inhibitory gating whose bifurcation structure is characterised computationally. Numerical simulation of the linearised field equation on the thalamocortical boundary demonstrates internal consistency: the simulated system produces power-law recovery dynamics, τ_rec ∝ |θ − θ_c|^ν, with ν in the range [0.5, 1.5] consistent with the model A universality class, and a Kuramoto mean-field derivation establishes that Φ(t) emerges as the natural order parameter of coupled thalamocortical oscillators rather than being postulated. The joint (|Φ(t)|, Var[|Φ(t)|]) phase space correctly separates simulated waking, anaesthetic, ictal, and minimally conscious regimes without parameter fitting to empirical data. All simulation code is publicly available.
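The claim that Φ(t) arises as a mean-field order parameter draws on the Kuramoto model of coupled phase oscillators. The sketch below illustrates only that generic textbook model, not the paper's thalamocortical field equation or its Φ(t): N all-to-all coupled oscillators are integrated using the exact mean-field rewriting dθ_j/dt = ω_j + K r sin(ψ − θ_j), where r e^{iψ} = (1/N) Σ_j e^{iθ_j}, and the time-averaged coherence r is reported for several couplings K. The oscillator count, unit-variance Gaussian natural frequencies, step size, and run length are illustrative assumptions.

```python
import cmath
import math
import random


def order_parameter(phases):
    """Return (r, psi) where r * exp(i * psi) is the mean of exp(i * theta_j)."""
    z = sum(cmath.exp(1j * th) for th in phases) / len(phases)
    return abs(z), cmath.phase(z)


def mean_coherence(coupling, n=200, dt=0.01, steps=3000, seed=0):
    """Euler-integrate the all-to-all Kuramoto model in its mean-field form
    and return r averaged over the second half of the run."""
    rng = random.Random(seed)
    omegas = [rng.gauss(0.0, 1.0) for _ in range(n)]            # natural frequencies
    phases = [rng.uniform(0.0, 2 * math.pi) for _ in range(n)]  # random initial phases
    history = []
    for _ in range(steps):
        r, psi = order_parameter(phases)
        history.append(r)
        # Each oscillator is pulled toward the mean phase psi with strength K * r.
        phases = [
            th + dt * (w + coupling * r * math.sin(psi - th))
            for th, w in zip(phases, omegas)
        ]
    tail = history[steps // 2:]
    return sum(tail) / len(tail)


if __name__ == "__main__":
    for k in (0.0, 1.0, 2.0, 4.0, 8.0):
        print(f"K = {k:4.1f}  ->  mean r = {mean_coherence(k):.3f}")
```

For unit-variance Gaussian frequencies the classical infinite-N critical coupling is K_c = 2/(π g(0)) = 2√(2π)/π ≈ 1.6, so r should stay near the finite-size noise floor (of order 1/√N) well below K_c and approach 1 well above it; close to K_c, finite-size fluctuations blur the transition.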
Six quantitatively specific, independently falsifiable predictions are derived across five experimental domains: power-law Gamma Dip scaling in near-threshold EEG with a specific exponent range; causal disruption of thalamocortical coherence by selective TRN silencing; opposite EEG scaling exponent deviations in ASD versus schizophrenia; systematic Φ_est collapse under propofol anaesthesia correlated with PCI; Φ_est as a real-time consciousness biomarker in disorders of consciousness; and clinical validity of Φ_est in disorders of consciousness and ictal state discrimination by the metastability index. Each prediction is stated with quantitative thresholds and a pre-specified falsification criterion. The framework provides: the first anatomically specified and formally derived order parameter for conscious-state organisation directly operationalisable from passive EEG; a mechanistically grounded identification of the TRN as the dynamical control parameter, testable by a single optogenetic experiment; and a computationally validated, pre-registerable programme of six falsifiable predictions defining a tractable empirical agenda. Φ_est would constitute a candidate real-time consciousness biomarker if the framework’s predictions are confirmed in purpose-designed experiments.
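Several of these predictions take the form of an exponent that must fall inside a stated range, such as the power-law recovery time with ν in [0.5, 1.5]. A hedged sketch of how such a criterion could be checked: taking logs turns τ_rec ∝ |θ − θ_c|^ν into a straight line, log τ_rec = c + ν log|θ − θ_c|, so ν can be estimated as an ordinary least-squares slope and compared with the range. The exponent convention is taken exactly as the abstract states it; the synthetic data, the assumption that θ_c is known, and the `falsified` rule are hypothetical, and a real pre-registered test would also need confidence intervals and an estimate of θ_c.

```python
import math


def fit_power_law_exponent(distances, recovery_times):
    """OLS slope of log(tau) against log|theta - theta_c|; returns (nu, log_prefactor)."""
    xs = [math.log(d) for d in distances]
    ys = [math.log(t) for t in recovery_times]
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    nu = sxy / sxx
    return nu, my - nu * mx


def falsified(nu, lower=0.5, upper=1.5):
    """Hypothetical pre-specified criterion: reject if nu falls outside [lower, upper]."""
    return not (lower <= nu <= upper)


if __name__ == "__main__":
    theta_c = 1.0                                # assumed known critical point
    thetas = [1.05, 1.1, 1.2, 1.4, 1.8]          # hypothetical control settings
    distances = [abs(t - theta_c) for t in thetas]
    taus = [3.0 * d ** 1.1 for d in distances]   # synthetic, noise-free data
    nu, _ = fit_power_law_exponent(distances, taus)
    print(f"nu = {nu:.3f}, falsified: {falsified(nu)}")
```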

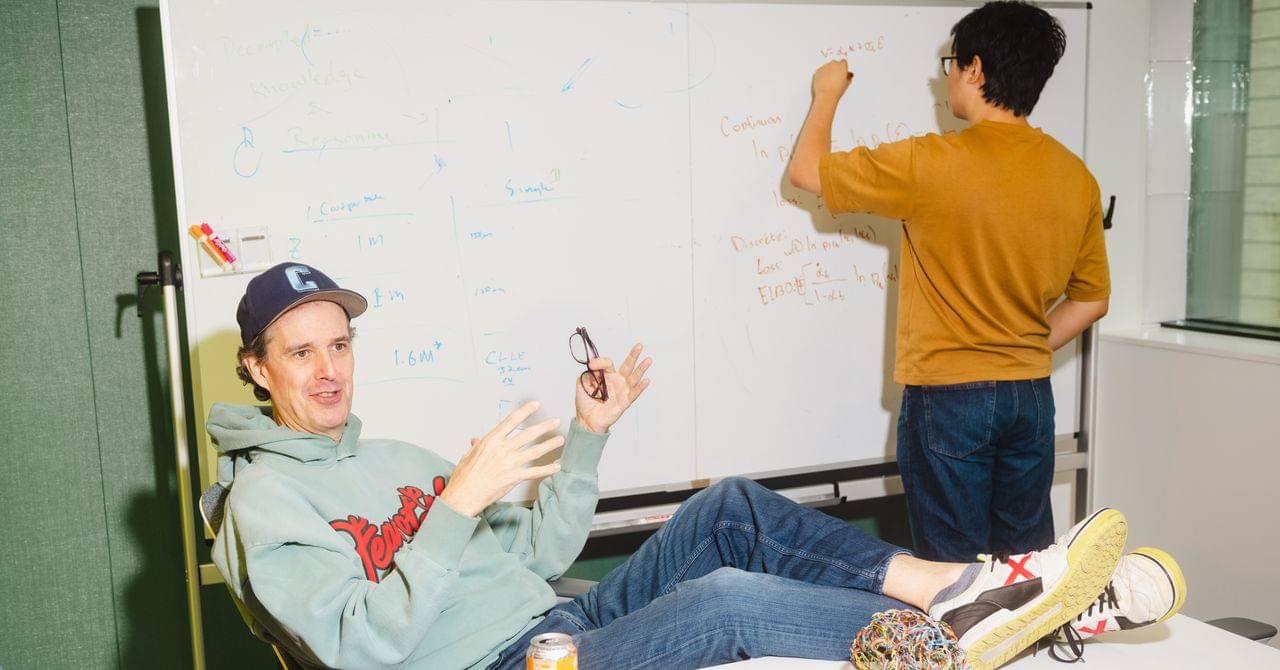

By definition, elementary particles can’t be broken into smaller pieces. But in a new theoretical study published in Physical Review Letters, Johannes Skaar and colleagues have revealed what would happen if you tried anyway for a single photon. The answer is deeply strange: attempting to cut a photon in two wouldn’t produce two smaller photons, but instead conjure an infinite number of them out of thin air.

Like any quantum particle, a photon exists simultaneously as a single, localized particle and as an extended wave spread out across space. For their investigation, Skaar’s team considered what would happen if a single photon passed through an optical shutter, essentially a very fast mirror that can be switched on and off to block part of a pulse of light. If the shutter was fast enough, it could intercept the photon mid-pulse, snipping off part of this extended wave.

To find out what would happen afterward, the researchers applied quantum equations that describe how the photon’s underlying electromagnetic field behaves at the quantum level. Specifically, their analysis tracked precisely how the photon’s quantum state would be transformed by the shutter’s intervention.

The security of modern communications heavily relies on systems that can rapidly and reliably verify users and the devices they are using. This process, known as authentication, essentially entails confirming that users or devices are legitimate (i.e., who or what they claim to be).

Conventional authentication systems rely on static cryptographic keys: fixed digital keys that encryption algorithms use to scramble readable data into unreadable ciphertext and back again. While these systems perform well in some contexts, they often struggle when networks include billions of devices that continuously connect and disconnect.
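For contrast with the new system, a conventional static-key scheme can be sketched as a challenge-response exchange: the verifier sends a fresh random challenge, and the device proves it holds a long-lived shared key by returning a keyed tag over that challenge. This generic illustration uses Python's standard `hmac`, `hashlib`, and `secrets` modules and a keyed MAC (HMAC-SHA256) rather than encryption; it is not the KAUST system, and the function names are hypothetical.

```python
import hashlib
import hmac
import secrets

# A static key provisioned once and shared by the device and the verifier.
# In a real deployment it would live in secure storage, not in source code.
DEVICE_KEY = secrets.token_bytes(32)


def issue_challenge():
    """Verifier side: a fresh random nonce, so old responses cannot be replayed."""
    return secrets.token_bytes(16)


def respond(key, challenge):
    """Device side: prove knowledge of the key without sending the key itself."""
    return hmac.new(key, challenge, hashlib.sha256).digest()


def verify(key, challenge, response):
    """Verifier side: recompute the tag and compare in constant time."""
    expected = hmac.new(key, challenge, hashlib.sha256).digest()
    return hmac.compare_digest(expected, response)


if __name__ == "__main__":
    challenge = issue_challenge()
    print("legitimate device:", verify(DEVICE_KEY, challenge, respond(DEVICE_KEY, challenge)))
    impostor_key = secrets.token_bytes(32)
    print("impostor:", verify(DEVICE_KEY, challenge, respond(impostor_key, challenge)))
```

Each device here depends on a key that is fixed at provisioning time, so issuing, storing, and rotating such keys for every device that joins or leaves the network is where this kind of design becomes hard to scale.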

Researchers at King Abdullah University of Science and Technology (KAUST) recently developed a new system that could authenticate devices faster and more reliably in real time, even when they are connecting to large-scale networks, cloud services or virtual environments.

Ilya Sutskever, co-founder of OpenAI and founder of Safe Superintelligence, says the scaling era from 2020 to 2025 is over, that pre-training will run out of data, and that the industry is back to pure research with more companies than ideas. He argues that AGI is the wrong target: what is actually coming is a learning algorithm that can take any job, learn it on the fly, and merge that knowledge across millions of simultaneous instances in a way humans cannot, producing rapid economic growth that regulation is unlikely to stop.

He predicts that once AI becomes visibly powerful, frontier companies will become paranoid overnight and governments will scramble, and says the only thing worth building is an AI aligned to sentient life broadly — not human life alone — because the AI itself will be sentient and will vastly outnumber humans within 5 to 20 years.

From that insight, Dirac built an entirely new formulation of the theory using what he called “q-numbers” (quantum numbers)—abstract quantities that don’t commute. He independently rediscovered aspects of Hilbert’s operator theory, though he preferred his own algebraic route because he found mathematicians’ obsession with convergence and existence theorems unappealing.
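Dirac's point that q-numbers need not commute can be made concrete with matrices, the simplest objects of that kind. This minimal NumPy illustration uses the Pauli spin matrices, a modern textbook example rather than Dirac's own notation: their commutator is nonzero, [σ_x, σ_y] = 2iσ_z, whereas ordinary numbers (Dirac's c-numbers) always commute.

```python
import numpy as np

# Pauli matrices: Hermitian 2x2 matrices representing spin-1/2 observables.
sigma_x = np.array([[0, 1], [1, 0]], dtype=complex)
sigma_y = np.array([[0, -1j], [1j, 0]], dtype=complex)
sigma_z = np.array([[1, 0], [0, -1]], dtype=complex)


def commutator(a, b):
    """[A, B] = AB - BA, which is zero exactly when A and B commute."""
    return a @ b - b @ a


if __name__ == "__main__":
    print(commutator(sigma_x, sigma_y))                              # a nonzero matrix
    print(np.allclose(commutator(sigma_x, sigma_y), 2j * sigma_z))  # True
    print(3.0 * 5.0 - 5.0 * 3.0)                                    # c-numbers commute: 0.0
```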

Paul Adrien Maurice Dirac (dih-RAK;[3] 8 August 1902 – 20 October 1984) was a British theoretical physicist who is considered to be one of the founders of quantum mechanics.[4][5] Dirac laid the foundations for both quantum electrodynamics and quantum field theory, coining the former term.[6][7][8][9] He was Lucasian Professor of Mathematics at the University of Cambridge from 1932 to 1969, and a professor of physics at Florida State University from 1970 to 1984. Dirac shared the 1933 Nobel Prize in Physics with Erwin Schrödinger “for the discovery of new productive forms of atomic theory.”[10]

Dirac graduated from the University of Bristol with a Bachelor of Science in Electrical Engineering in 1921, and a Bachelor of Arts in Mathematics in 1923.[11] Dirac then graduated from St John’s College, Cambridge, with a Doctor of Philosophy in Physics in 1926, writing the first ever thesis on quantum mechanics.[12]

He formulated the Dirac equation, one of the most important results in physics, in 1928.[7] It connected special relativity and quantum mechanics and predicted the existence of antimatter.[13] He wrote a famous paper in 1931,[14] which further predicted the existence of antimatter.[15][16][13] Dirac also contributed greatly to the reconciliation of general relativity with quantum mechanics. He contributed to Fermi–Dirac statistics, which describes the behaviour of fermions, particles with half-integer spin. His 1930 monograph, The Principles of Quantum Mechanics, is one of the most influential texts on the subject.[17] He and Schrödinger tied for eighth in a Physics World poll of the greatest physicists of all time.[18]

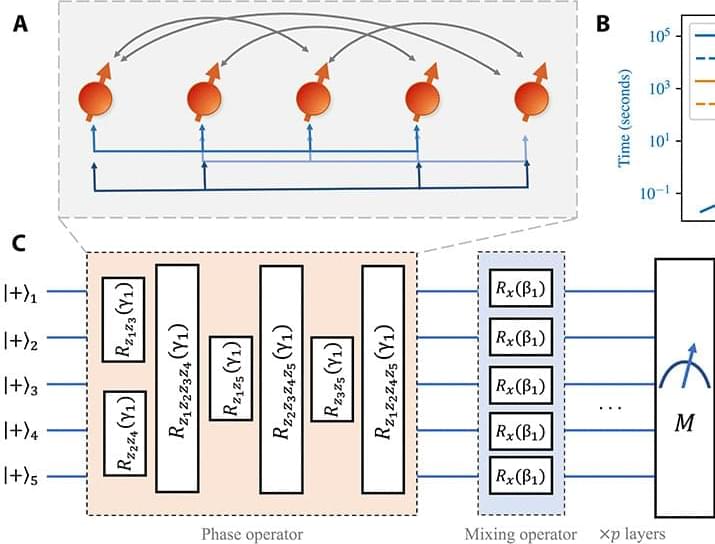

We study the scaling of QAOA TTS with the problem size on the low autocorrelation binary sequences (LABS) problem (15, 16), also known as the Bernasconi model in statistical physics (17, 18). The LABS problem has applications in communications engineering, where the low autocorrelation sequences are used for designing radar pulses (15, 19). To solve LABS, one has to produce a sequence of N bits that minimizes a specific quartic objective.
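In the standard formulation of LABS, the quartic objective is the sidelobe energy E(s) = Σ_{k=1}^{N−1} C_k(s)², built from the aperiodic autocorrelations C_k(s) = Σ_{i=1}^{N−k} s_i s_{i+k} over spins s_i ∈ {−1, +1}; each C_k is quadratic in the spins, so E is quartic. The brute-force search below is a sketch for tiny N only (its cost grows as 2^N) and is not the simulator or the classical solvers used in the study.

```python
from itertools import product


def autocorrelation(s, k):
    """Aperiodic autocorrelation C_k = sum_i s_i * s_{i+k}."""
    return sum(s[i] * s[i + k] for i in range(len(s) - k))


def labs_energy(s):
    """Sidelobe energy E = sum_{k=1}^{N-1} C_k^2 (quartic in the spins)."""
    return sum(autocorrelation(s, k) ** 2 for k in range(1, len(s)))


def merit_factor(s):
    """Merit factor F = N^2 / (2E), the usual figure of merit for LABS."""
    return len(s) ** 2 / (2 * labs_energy(s))


def brute_force_min(n):
    """Exhaustive search over all 2^n sequences; feasible only for small n."""
    best = min(product((-1, 1), repeat=n), key=labs_energy)
    return labs_energy(best), best


if __name__ == "__main__":
    for n in range(3, 13):
        energy, seq = brute_force_min(n)
        print(n, energy, "".join("+" if x > 0 else "-" for x in seq))
```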

We choose LABS to study the scaling of QAOA TTS for the following three reasons. First, the complexity of LABS grows rapidly, with optimal solutions known only for N ≤ 66 and the best heuristics producing approximate solutions of quality decaying with N for N ≳ 200 (20, 21). This makes it a promising candidate problem, since only a few hundred qubits are required to tackle classically intractable instances. Second, the performance of classical solvers for LABS has been benchmarked (20, 21) in terms of the scaling of their TTS with problem size. Since optimal solutions are only known for N ≤ 66, the scaling of TTS for all classical solvers is obtained by fitting results for N ≤ 66. We reproduce these results and observe that the scaling of classical solvers at N ≤ 40 matches the behavior for N up to 66 reported in the literature. This provides evidence that the scaling we observe for QAOA at N ≤ 40 will similarly extrapolate to larger N. Third, LABS has only one instance per problem size N. Combined with the hardness of LABS, this makes it possible to reliably study the scaling of QAOA at large problem sizes, where simulating tens or hundreds of random instances would be computationally infeasible.

We obtain the scaling by performing noiseless exact simulation of QAOA with fixed schedules. Our results are enabled by a custom algorithm-specific graphics processing unit (GPU) simulator (22), which we execute using up to 1,024 GPUs per simulation on the Polaris supercomputer accessed through the Argonne Leadership Computing Facility. We find that the TTS of QAOA with number of layers p = 12 grows as 1.46^N, which is improved to 1.21^N if combined with quantum minimum finding. This scaling is better than that of the best classical heuristic, which has a TTS that grows as 1.34^N. We note that we do not propose any new quantum algorithms in this work. Instead, we study a general quantum optimization heuristic with broad applicability (namely, QAOA) and make no specific modifications to adapt it to the LABS problem.
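Exponential scaling of the form TTS ∝ b^N is commonly estimated by a linear least-squares fit of log TTS against N, with b = exp(slope). The sketch below does this on synthetic, noise-free data; the prefactor and problem sizes are invented for illustration and are not the study's measurements. It also checks a piece of arithmetic that is consistent with, though not stated in, the text: a quadratic speedup from quantum minimum finding would turn 1.46^N into 1.46^(N/2) = (√1.46)^N ≈ 1.208^N, which rounds to the reported 1.21^N.

```python
import math


def fit_exponential_base(sizes, tts):
    """Least-squares fit of log(TTS) = a + N * log(b); returns the base b."""
    logs = [math.log(t) for t in tts]
    n = len(sizes)
    mx, my = sum(sizes) / n, sum(logs) / n
    slope = sum((x - mx) * (y - my) for x, y in zip(sizes, logs)) / sum(
        (x - mx) ** 2 for x in sizes
    )
    return math.exp(slope)


if __name__ == "__main__":
    sizes = list(range(20, 41, 2))               # hypothetical problem sizes N
    tts = [0.7 * 1.46 ** n for n in sizes]       # synthetic TTS growing as 1.46^N
    base = fit_exponential_base(sizes, tts)
    print(f"fitted base: {base:.3f}")                          # 1.460
    print(f"with a quadratic speedup: {math.sqrt(base):.3f}")  # 1.208
```

Extrapolating such a fit beyond the fitted range is an assumption in its own right, which is why the text checks that classical scaling at N ≤ 40 matches the literature up to N = 66.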

Mathematicians are challenging the idea that dark energy is responsible for the accelerating expansion of the universe. In a new paper published in Proceedings of the Royal Society A, mathematicians from the University of California, Davis, provide mathematical proof that instabilities inherent in the Einstein-Euler equations imply that the current model of the expanding universe is not viable.

The Einstein-Euler equations are a union of general relativity and fluid dynamics equations used to model astronomical phenomena such as galaxies, black holes, and cosmic expansion.

The research directly challenges the Lambda-cold dark matter model, the standard cosmological model of the Big Bang.

In physics, quantum tunnelling, barrier penetration, or simply tunnelling is a quantum mechanical phenomenon in which an object such as an electron or atom passes through a potential energy barrier that, according to classical mechanics, should not be passable due to the object not having sufficient energy to pass or surmount the barrier.

Tunnelling is a consequence of the wave nature of matter and quantum indeterminacy. The quantum wave function describes the states of a particle or other physical system, and wave equations such as the Schrödinger equation describe their evolution. In a system with a short, narrow potential barrier, a small part of the wavefunction can appear outside of the barrier, representing a probability of tunnelling through the barrier.

Since the probability of transmission of a wave packet through a barrier decreases exponentially with the barrier height, the barrier width, and the tunnelling particle’s mass, tunnelling is seen most prominently in low-mass particles such as electrons tunnelling through atomically narrow barriers. However, tunnelling has been observed with protons and even atoms, and tunnelling has been used to explain physical effects with particles this large.
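The mass and width dependence can be checked with the textbook transmission coefficient for a rectangular barrier of height V₀ and width L when the particle energy satisfies E < V₀: T = [1 + V₀² sinh²(κL) / (4E(V₀ − E))]⁻¹ with κ = √(2m(V₀ − E))/ħ. For κL ≫ 1 this behaves like exp(−2κL) up to a prefactor, so the exponent grows linearly with the width and with the square root of the mass. The barrier values below (2 eV high, particle energy 1 eV, widths of 1 Å and 2 Å) are illustrative assumptions chosen only to compare an electron with a proton.

```python
import math

HBAR = 1.054571817e-34         # reduced Planck constant, J*s
EV = 1.602176634e-19           # one electronvolt, in joules
M_ELECTRON = 9.1093837015e-31  # electron mass, kg
M_PROTON = 1.67262192369e-27   # proton mass, kg


def transmission(mass, energy_ev, barrier_ev, width_m):
    """Exact transmission probability through a rectangular barrier for E < V0."""
    e, v0 = energy_ev * EV, barrier_ev * EV
    kappa = math.sqrt(2 * mass * (v0 - e)) / HBAR  # decay constant inside the barrier
    s = math.sinh(kappa * width_m)
    return 1.0 / (1.0 + (v0 ** 2 * s ** 2) / (4 * e * (v0 - e)))


if __name__ == "__main__":
    for name, m in (("electron", M_ELECTRON), ("proton", M_PROTON)):
        for width in (1e-10, 2e-10):
            t = transmission(m, energy_ev=1.0, barrier_ev=2.0, width_m=width)
            print(f"{name:8s} width {width:.0e} m: T = {t:.3e}")
```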