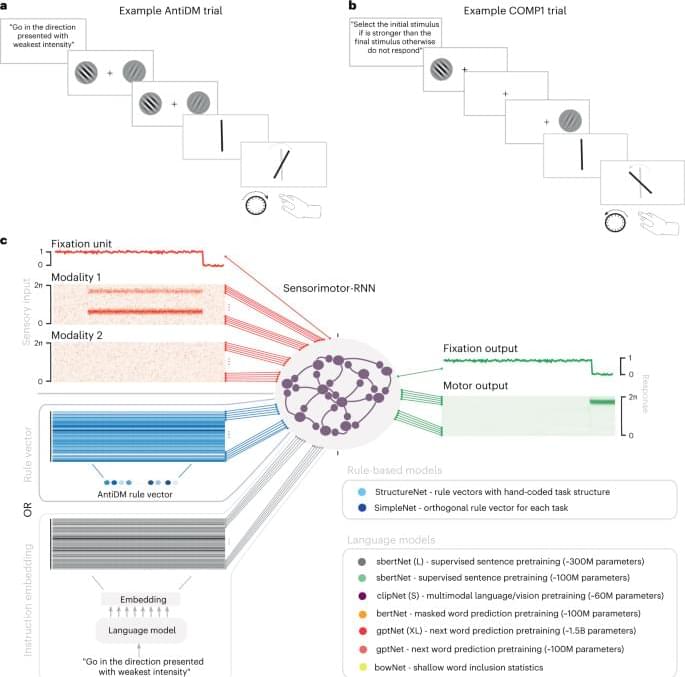

In this study, we use the latest advances in natural language processing to build tractable models of the ability to interpret instructions to guide actions in novel settings and the ability to produce a description of a task once it has been learned. RNNs can learn to perform a set of psychophysical tasks simultaneously using a pretrained language transformer to embed a natural language instruction for the current task. Our best-performing models can leverage these embeddings to perform a brand-new model with an average performance of 83% correct. Instructed models that generalize performance do so by leveraging the shared compositional structure of instruction embeddings and task representations, such that an inference about the relations between practiced and novel instructions leads to a good inference about what sensorimotor transformation is required for the unseen task. Finally, we show a network can invert this information and provide a linguistic description for a task based only on the sensorimotor contingency it observes.

Our models make several predictions for what neural representations to expect in brain areas that integrate linguistic information in order to exert control over sensorimotor areas. Firstly, the CCGP analysis of our model hierarchy suggests that when humans must generalize across (or switch between) a set of related tasks based on instructions, the neural geometry observed among sensorimotor mappings should also be present in semantic representations of instructions. This prediction is well grounded in the existing experimental literature where multiple studies have observed the type of abstract structure we find in our sensorimotor-RNNs also exists in sensorimotor areas of biological brains3,36,37. Our models theorize that the emergence of an equivalent task-related structure in language areas is essential to instructed action in humans. One intriguing candidate for an area that may support such representations is the language selective subregion of the left inferior frontal gyrus. This area is sensitive to both lexico-semantic and syntactic aspects of sentence comprehension, is implicated in tasks that require semantic control and lies anatomically adjacent to another functional subregion of the left inferior frontal gyrus, which is implicated in flexible cognition38,39,40,41. We also predict that individual units involved in implementing sensorimotor mappings should modulate their tuning properties on a trial-by-trial basis according to the semantics of the input instructions, and that failure to modulate tuning in the expected way should lead to poor generalization. This prediction may be especially useful to interpret multiunit recordings in humans. Finally, given that grounding linguistic knowledge in the sensorimotor demands of the task set improved performance across models (Fig. 2e), we predict that during learning the highest level of the language processing hierarchy should likewise be shaped by the embodied processes that accompany linguistic inputs, for example, motor planning or affordance evaluation42.

One notable negative result of our study is the relatively poor generalization performance of GPTNET (XL), which used at least an order of magnitude more parameters than other models. This is particularly striking given that activity in these models is predictive of many behavioral and neural signatures of human language processing10,11. Given this, future imaging studies may be guided by the representations in both autoregressive models and our best-performing models to delineate a full gradient of brain areas involved in each stage of instruction following, from low-level next-word prediction to higher-level structured-sentence representations to the sensorimotor control that language informs.