A compass always points north, or does it? Magnets normally maintain a stable direction of magnetization, pointing from south to north (S→N). However, this direction can change when the magnet is exposed to a strong magnetic field or to heat. For example, a compass placed near a strong magnet may no longer point north.

Magnets can also lose their magnetism when exposed to high temperatures. This matters for more than finding your way on a camping trip: if the magnets in hard drives and other memory storage devices are affected, all of the data stored on them could be lost.

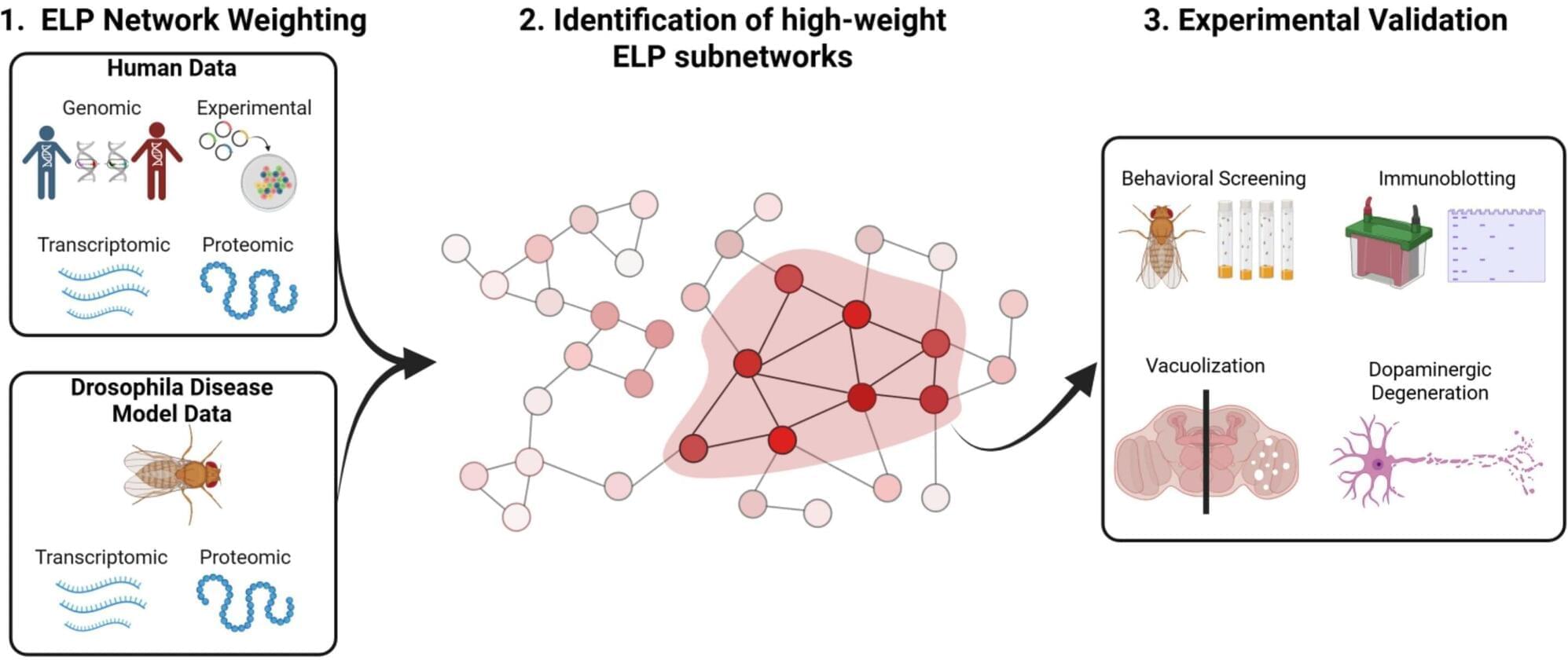

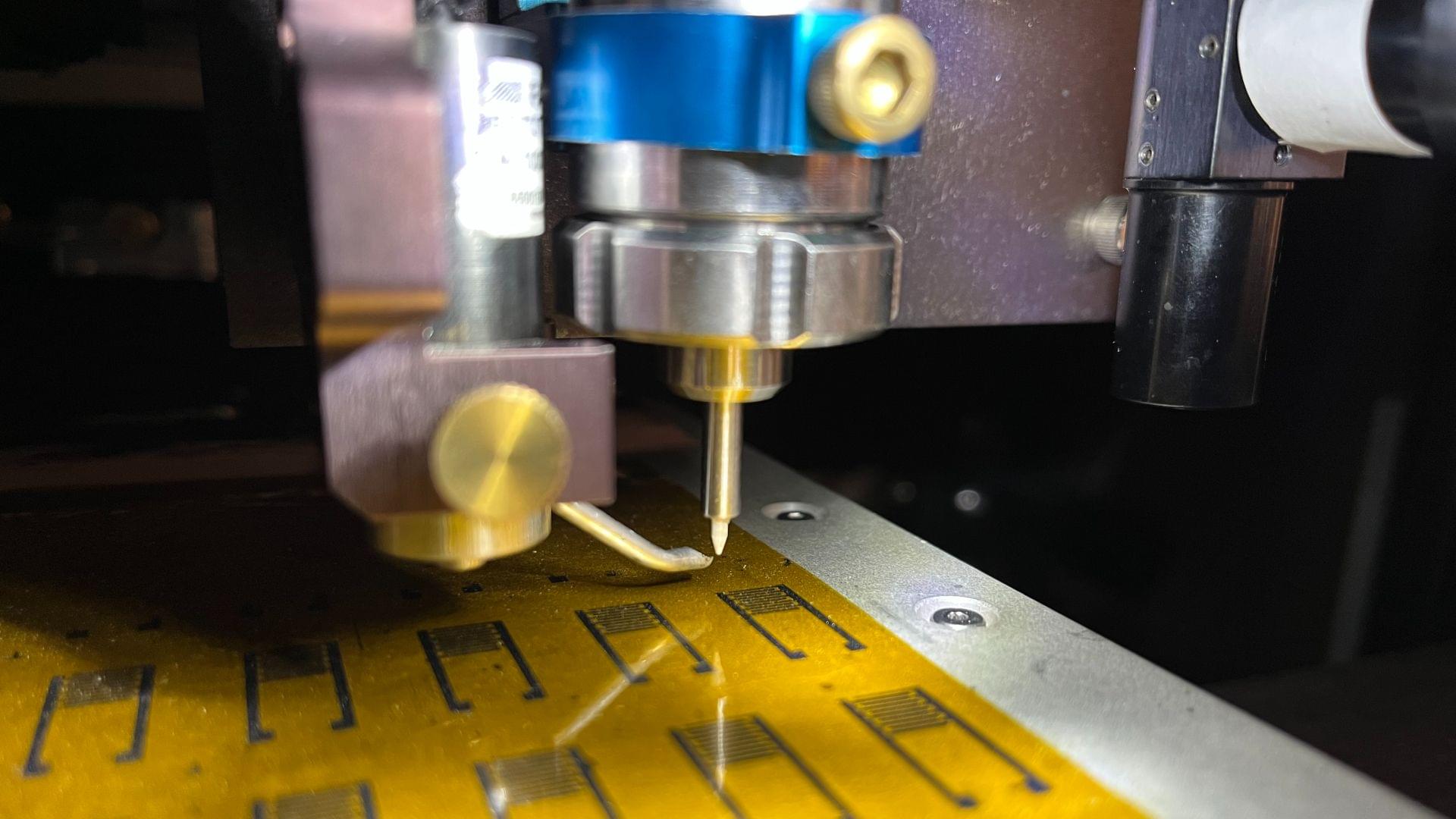

Researchers at Tohoku University set out to better understand how this thermally activated switching occurs in nanomagnets and, for the first time, measured it experimentally. Their results are published in Communications Materials.