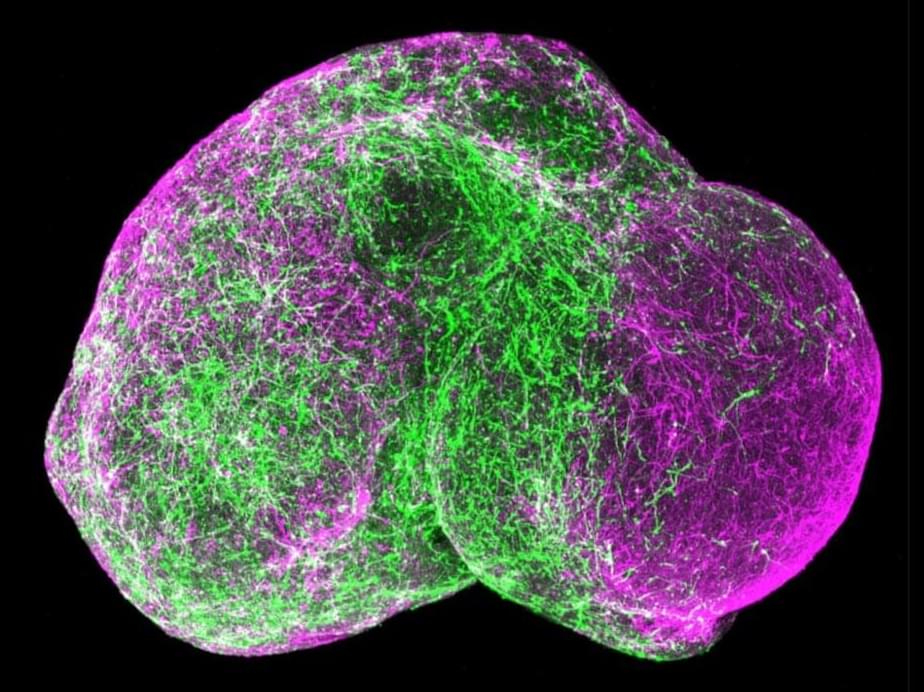

Among the pressing ethical concerns is whether brain organoids can feel pain or become conscious, and how we would know.

In the 21st century, powerful new technologies, such as various artificial intelligence (AI) agents, have become omnipresent and the center of public debate. With the increasing fear of AI agents replacing humans, there are discussions about whether individuals should strive to enhance themselves. For instance, the philosophical movement Transhumanism proposes the broad enhancement of human characteristics such as cognitive abilities, personality, and moral values (e.g., Grassie and Hansell 2011; Ranisch and Sorgner 2014). This enhancement should help humans to overcome their natural limitations and to keep up with powerful technologies that are increasingly present in today’s world (see Ranisch and Sorgner 2014). In the present article, we focus on one of the most frequently discussed forms of enhancement: the enhancement of human cognitive abilities.

Not only in science but also among the general population, cognitive enhancement, such as increasing one’s intelligence or working memory capacity, has been a frequently debated topic for many years (see Pauen 2019). Accordingly, a great deal of psychological and neuroscientific research has investigated different methods to increase cognitive abilities, but so far effective methods for cognitive enhancement are lacking (Jaušovec and Pahor 2017). Nevertheless, multiple different (and partly new) technologies that promise an enhancement of cognition are available to the general public. Transhumanists especially promote the application of brain stimulation techniques, smart drugs, or gene editing for cognitive enhancement (e.g., Bostrom and Sandberg 2009). Importantly, little is known about the characteristics of individuals who would use such enhancement methods to improve their cognition. Thus, in the present study, we investigated different predictors of the acceptance of multiple widely discussed enhancement methods. More specifically, we tested whether individuals’ psychometrically measured intelligence, self-estimated intelligence, implicit theories about intelligence, personality (Big Five and Dark Triad traits), and specific interests (science-fiction hobbyism) as well as values (purity norms) predict their acceptance of cognitive enhancement (i.e., whether they would use such methods to enhance their cognition).

A new study published in Applied Psychology provides evidence that the belief in free will may carry unintended negative consequences for how individuals view gay men. The findings suggest that while believing in free will often promotes moral responsibility, it is also associated with less favorable attitudes toward gay men and preferential treatment for heterosexual men. This effect appears to be driven by the perception that sexual orientation is a personal choice.

Psychological research has historically investigated the concept of free will as a positive force in social behavior. Scholars have frequently observed that when people believe they have control over their actions, they tend to act more responsibly and helpfully. The general assumption has been that a sense of agency leads to adherence to moral standards. However, the authors of the current study argued that this sense of agency might have a “dark side” when applied to social groups that are often stigmatized.

The researchers reasoned that if people believe strongly in human agency, they may incorrectly attribute complex traits like sexual orientation to personal decision-making. This attribution could lead to the conclusion that gay men are responsible for their sexual orientation.

“Feral” gossip spread via AI bots is likely to become more frequent and pervasive, causing reputational damage and shame, humiliation, anxiety, and distress, researchers have warned.

Chatbots like ChatGPT, Claude, and Gemini don’t just make things up—they generate and spread gossip, complete with negative evaluations and juicy rumors that can cause real-world harm, according to new analysis by philosophers Joel Krueger and Lucy Osler from the University of Exeter.

The research is published in the journal Ethics and Information Technology.

As the geopolitical climate shifts, we increasingly hear warmongering pronouncements that tend to resurrect popular sentiments we naïvely believed had been buried by history. Among these is the claim that Europe is weak and cowardly, unwilling to cross the threshold between adolescence and adulthood. Maturity, according to this narrative, demands rearmament and a head-on confrontation with the challenges of the present historical moment. Yet beneath this rhetoric lies a far more troubling transformation.

We are witnessing a blatant attempt to replace the prevailing moral framework—until recently ecumenically oriented toward a passive and often regressive environmentalism—with a value system founded on belligerence. This new morality defines itself against “enemies” of presumed interests, whether national, ethnic, or ideological.

Those who expected a different kind of shift—one that would abandon regressive policies in favor of an active, forward-looking environmentalism—have been rudely awakened. The self-proclaimed revolutionaries sing an old and worn-out song: war. These new “futurists” embrace a technocratic faith that goes far beyond a legitimate trust in science and technology—long maligned during the previous ideological era—and descends into open contempt for human beings themselves, now portrayed as redundant or even burdensome in the age of the supposedly unstoppable rise of artificial intelligence.

Researchers Rachel Ruttan and Katherine DeCelles of the University of Toronto’s Rotman School of Management are anything but neutral on neutrality. The next time you’re tempted to play it safe on a hot-button topic, their evidence-based advice is to consider saying what you really think.

That’s because their recent research, based on more than a dozen experiments with thousands of participants, reveals that people take a dim view of others’ professed neutrality on controversial issues, rating them just as morally suspect as those expressing an opposing viewpoint, if not worse.

“Neutrality gives you no advantage over opposition,” says Prof. Ruttan, an associate professor of organizational behavior and human resource management with an interest in moral judgment and prosocial behavior. “You’re not pleasing anyone.”

There is no such thing as a society where everyone is equal. That is the key message of new research that challenges the romantic ideal of a perfectly egalitarian human society.

Anthropologist Duncan Stibbard-Hawkes and his colleague Chris von Rueden reviewed extensive evidence, such as ethnographic accounts and detailed field observation from contemporary groups often viewed as egalitarian, that is, where everyone is equal in power, wealth and status.

These included the Tanzanian Hadza, the Malay Batek and the Kalahari !Kung. They were prompted by general confusion over how to define “egalitarianism” and the desire to debunk the idea of the “noble savage,” which suggests that so-called primitive people live moral, peaceful lives close to nature.

AI companies are explicitly working toward AGI and are likely to succeed soon, possibly within years. Keep the Future Human explains how unchecked development of smarter-than-human, autonomous, general-purpose AI systems will almost inevitably lead to human replacement. But it doesn’t have to. Learn how we can keep the future human and experience the extraordinary benefits of Tool AI…

https://keepthefuturehuman.ai.

Chapters:

0:00 – The AI Race.

1:31 – Extinction Risk.

2:35 – AGI When?

4:08 – AI Will Take Power.

5:12 – Good News.

6:52 – Bad News.

8:11 – More Money, More Capability.

10:10 – AI-Assisted Alignment?

11:32 – Obedient vs Sovereign AI

12:53 – AI Is a New Lifeform.

Original Videos:

• Keep the Future Human (with Anthony Aguirre)

• AI Ethics Series | Ethical Horizons in Adv…

Editing:

https://zeino.tv/

The journey “Up from Eden” could involve humanity’s growth in understanding, comprehending and appreciating with greater love, truth and wisdom, shaping a future worth living for.

AI is accelerating faster than human biology. What happens to humanity when the future moves faster than we can evolve?

Oxford philosopher Nick Bostrom, author of Superintelligence, says we are entering the biggest turning point in human history — one that could redefine what it means to be human.

In this talk, Bostrom explains why AI might be the last invention humans ever make, and how the next decade could bring changes that once took thousands of years in health, longevity, and human evolution. He warns that digital minds may one day outnumber biological humans — and that this shift could change everything about how we live and who we become.

Superintelligence will force us to choose what humanity becomes next.