
The Hubbard Model


For decades, enterprises have jury-rigged software designed for structured data when trying to solve unstructured, text-based data problems. Although these solutions performed poorly, there was nothing else. Recently, though, machine learning (ML) has improved significantly at understanding natural language.

Unsurprisingly, Silicon Valley is in a mad dash to build market-leading offerings for this new opportunity. Khosla Ventures thinks natural language processing (NLP) is the most important technology trend of the next five years. If the 2000s were about becoming a big data-enabled enterprise, and the 2010s were about becoming a data science-enabled enterprise — then the 2020s are about becoming a natural language-enabled enterprise.

The past may be a fixed and immutable point, but with the help of machine learning, the future can at times be more easily divined.

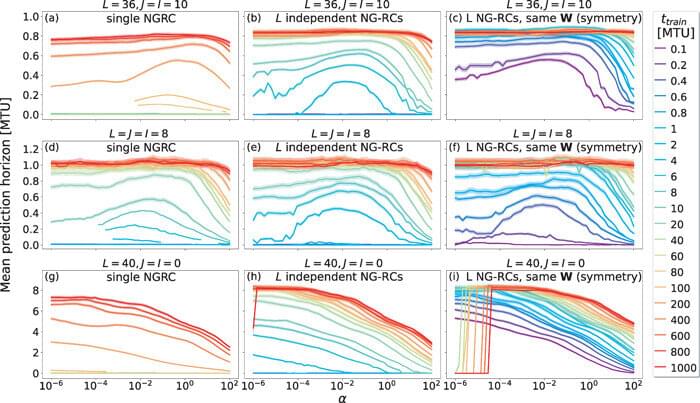

Using a new type of machine learning method called next generation reservoir computing, researchers at The Ohio State University have recently found a new way to predict the behavior of spatiotemporal chaotic systems—such as changes in Earth’s weather—that are particularly complex for scientists to forecast.

The study, published today in the journal Chaos: An Interdisciplinary Journal of Nonlinear Science, utilizes a new and highly efficient algorithm that, when combined with next generation reservoir computing, can learn spatiotemporal chaotic systems in a fraction of the time of other machine learning algorithms.


As everyday technologies become more advanced, cyber security must be at the forefront of every customer's mind. Cyber security services have become common and are often used by private companies and the public sector to protect themselves from potential cyber attacks.

One of these providers is Cybersprint, a Dutch provider of advanced cyber security services and a maker of specialized tools that use machine learning algorithms to detect cyber vulnerabilities, which has recently been acquired by Darktrace. Based on attack path modeling and graph theory, Darktrace’s platform represents organizational networks as directed, weighted graphs in which nodes are joined by edges. The edge weights can be used to estimate the probability that an attacker will be able to move successfully from node A to node B. The resulting insights should make it easier for Darktrace to simulate future attacks.
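To make the graph idea concrete, here is a minimal sketch of attack path modeling on a directed, weighted graph. It is not the vendor's actual implementation. The node names, the edge probabilities, and the function `most_likely_attack_path` are all hypothetical, and the sketch assumes that each edge weight is the probability of a successful move along that edge and that moves are independent, so a path's probability is the product of its edge weights.

```python
import heapq
import math


def most_likely_attack_path(graph, source, target):
    """Return (probability, path) for the most probable route from source to target.

    graph maps each node to {neighbor: probability of a successful move}.
    Assumption: edge probabilities are independent, so a path's probability
    is the product of the probabilities along it.
    """
    # Maximizing a product of probabilities is the same as minimizing the sum
    # of -log(p), so Dijkstra's shortest-path algorithm applies directly.
    best_cost = {source: 0.0}
    previous = {}
    queue = [(0.0, source)]
    while queue:
        cost, node = heapq.heappop(queue)
        if node == target:
            break
        if cost > best_cost.get(node, math.inf):
            continue  # stale queue entry, a cheaper route was already found
        for neighbor, p in graph.get(node, {}).items():
            if p <= 0.0:
                continue  # a move with zero probability can never be taken
            new_cost = cost - math.log(p)
            if new_cost < best_cost.get(neighbor, math.inf):
                best_cost[neighbor] = new_cost
                previous[neighbor] = node
                heapq.heappush(queue, (new_cost, neighbor))
    if target not in best_cost:
        return 0.0, []  # target is unreachable from source
    # Walk the predecessor links back from the target to rebuild the path.
    path = [target]
    while path[-1] != source:
        path.append(previous[path[-1]])
    path.reverse()
    return math.exp(-best_cost[target]), path


# Hypothetical network: each weight is an assumed probability of lateral movement.
network = {
    "A": {"web": 0.9, "vpn": 0.3},
    "web": {"db": 0.5, "B": 0.1},
    "vpn": {"B": 0.8},
    "db": {"B": 0.6},
}
probability, path = most_likely_attack_path(network, "A", "B")
```

In this toy network the shortest route in hops, from A through `web` straight to B, is not the most likely one, because the final hop is improbable. Weighting the edges is what lets a defender rank routes by likelihood rather than by length.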

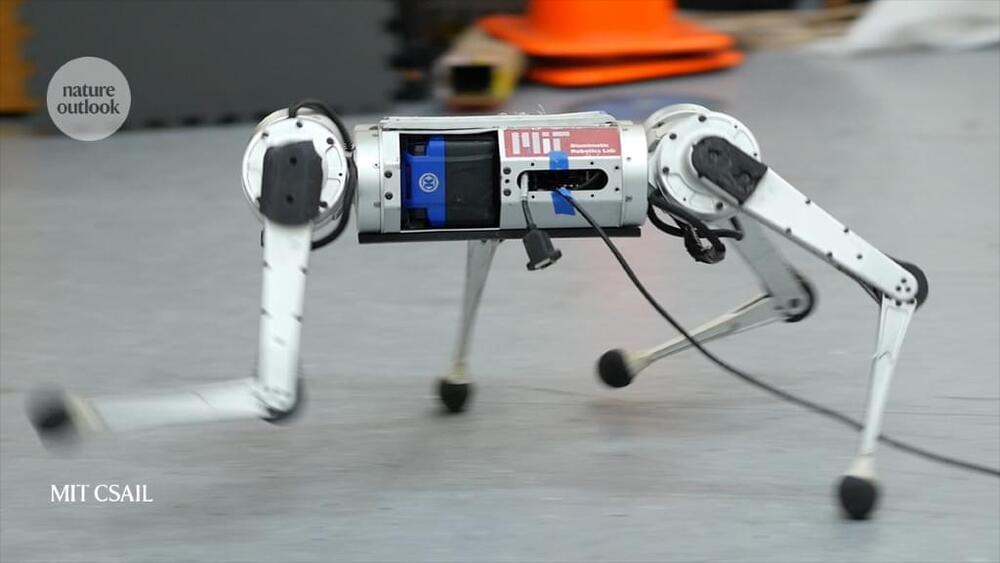

Such robotic schools could be tasked with locating and recording data on coral reefs to help researchers study the reefs’ health over time. Living fish in a school might engage in different behaviours simultaneously (some mating, some caring for young, others finding food) but suddenly move as one when a predator approaches. In the same way, robotic fish would have to perform individual tasks while communicating with each other when it’s time to do something different.

“The majority of what my lab really looks at is the coordination techniques — what kinds of algorithms have evolved in nature to make systems work well together?” she says.

Many roboticists are looking to biology for inspiration in robot design, particularly in the area of locomotion. Although big industrial robots in vehicle factories, for instance, remain anchored in place, other robots will be more useful if they can move through the world, performing different tasks and coordinating their behaviour.

Neural networks are learning algorithms that approximate the solution to a task by training with available data. However, it is usually unclear how exactly they accomplish this. Two young Basel physicists have now derived mathematical expressions that allow one to calculate the optimal solution without training a network. Their results not only give insight into how those learning algorithms work, but could also help to detect unknown phase transitions in physical systems in the future.

Neural networks are modeled on the way the brain operates. Such computer algorithms learn to solve problems through repeated training and can, for example, distinguish objects or process spoken language.

For several years now, physicists have been trying to use neural networks to detect phase transitions as well. Phase transitions are familiar to us from everyday experience, for instance when water freezes to ice, but they also occur in more complex form between different phases of magnetic materials or quantum systems, where they are often difficult to detect.

In July, Meta unveiled Make-a-Scene, its text-to-image generation AI, which, like Dall-E and Midjourney, uses machine learning algorithms (and massive databases of scraped online artwork) to create fantastical depictions of written prompts. On Thursday, Meta CEO Mark Zuckerberg revealed Make-a-Scene’s more animated contemporary, Make-a-Video.

As its name implies, Make-a-Video is “a new AI system that lets people turn text prompts into brief, high-quality video clips,” Zuckerberg wrote in a Meta blog Thursday. Functionally, Video works the same way that Scene does, relying on a mix of natural language processing and generative neural networks to convert non-visual prompts into images; it’s just pulling content in a different format.

“Our intuition is simple: learn what the world looks like and how it is described from paired text-image data, and learn how the world moves from unsupervised video footage,” a team of Meta researchers wrote in a research paper published Thursday morning. Doing so enabled the team to reduce the amount of time needed to train the Video model and eliminate the need for paired text-video data, while preserving “the vastness (diversity in aesthetic, fantastical depictions, etc.) of today’s image generation models.”

This year’s Breakthrough Prize in Life Sciences has a strong physical sciences element. The prize was divided between six individuals. Demis Hassabis and John Jumper of the London-based AI company DeepMind were awarded a third of the prize for developing AlphaFold, a machine-learning algorithm that can accurately predict the 3D structure of proteins from just the amino-acid sequence of their polypeptide chain. Emmanuel Mignot of Stanford University School of Medicine and Masashi Yanagisawa of the University of Tsukuba, Japan, were awarded for their work on the sleeping disorder narcolepsy.

The remainder of the prize went to Clifford Brangwynne of Princeton University and Anthony Hyman of the Max Planck Institute of Molecular Cell Biology and Genetics in Germany for discovering that the molecular machinery within a cell—proteins and RNA—organizes by phase separating into liquid droplets. This phase separation process has since been shown to be involved in several basic cellular functions, including gene expression, protein synthesis and storage, and stress responses.

The award for Brangwynne and Hyman shows “the transformative role that the physics of soft matter and the physics of polymers can play in cell biology,” says Rohit Pappu, a biophysicist and bioengineer at Washington University in St. Louis. “[The discovery] could only have happened the way it did: a creative young physicist working with an imaginative cell biologist in an ecosystem where boundaries were always being pushed at the intersection of multiple disciplines.”

Open source is fertile ground for transformative software, especially in cutting-edge domains like artificial intelligence (AI) and machine learning. The open source ethos and collaboration tools make it easier for teams to share code and data and build on the success of others.

This article looks at 13 open source projects that are remaking the world of AI and machine learning. Some are elaborate software packages that support new algorithms. Others are more subtly transformative. All of them are worth a look.

A new longevity-focused venture capital fund is preparing to announce its first investments, as it seeks to accelerate commercialisation in the field. Joining the likes of Maximon, Apollo and Korify, New York’s Life Extension Ventures (LifeX) has put together a $100 million fund specifically for companies developing solutions to extend the longevity of both humans and our planet. In a slight twist, the fund is predominantly looking to invest in companies that put software and data at the heart of their efforts to hasten the adoption of scientific breakthroughs in longevity.

Longevity.Technology: The longevity field is alive with innovation, and developments in AI and Big Data are just some of the software-led technologies driving progress throughout the sector. LifeX Ventures was co-founded by scientists-turned-entrepreneurs Amol Sarva and Inaki Berenguer, and its investment philosophy draws on their combined experience building software-led companies across a wide range of sectors. We caught up with Sarva to learn more.

Between them, Sarva (a cognitive scientist by training) and Berenguer have led or founded several startups, such as CoverWallet, Virgin Mobile USA and Halo Neuroscience. The two had also invested personally in more than 150 startups before their interest turned more recently to longevity.