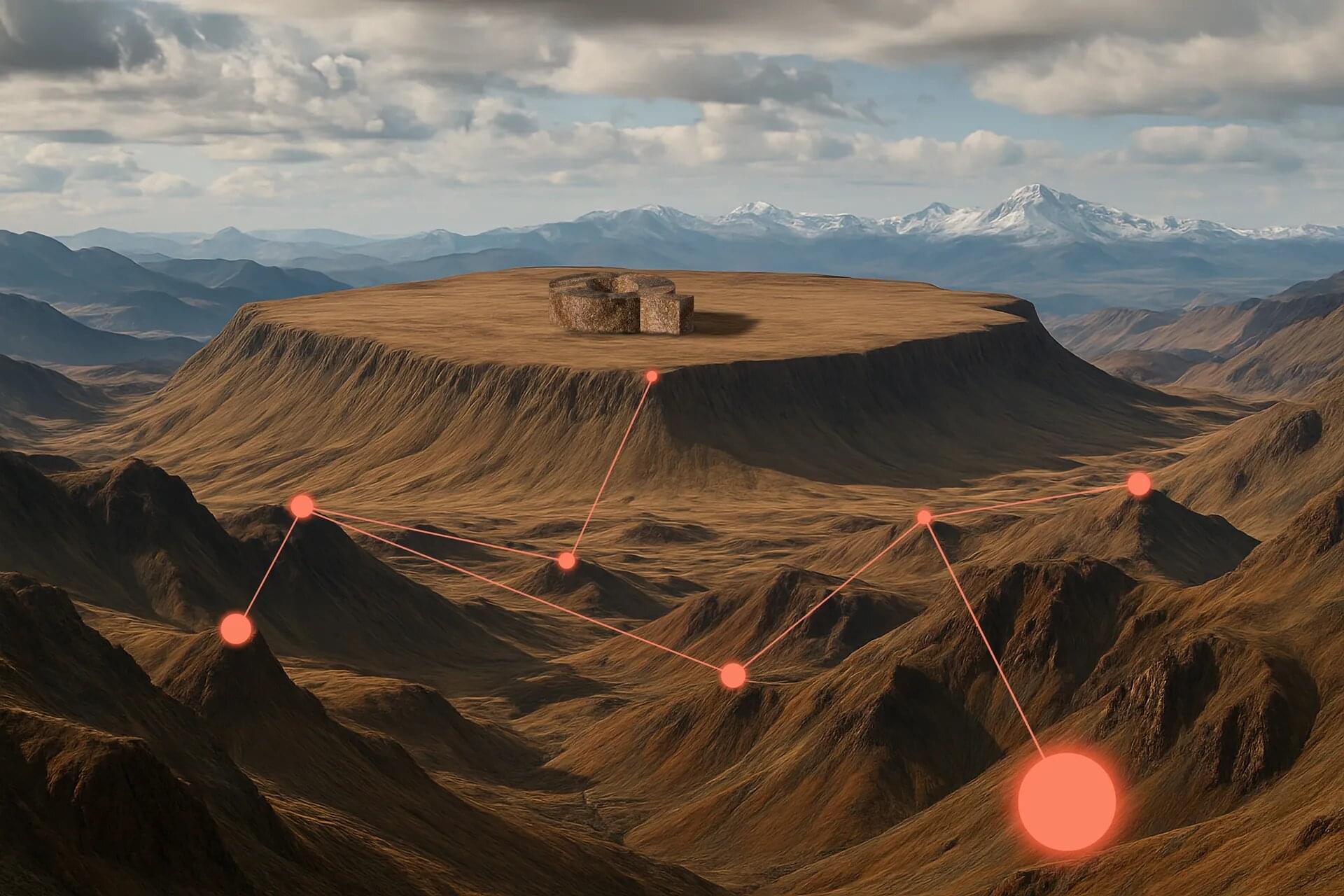

Binary neutron star mergers, cosmic collisions between two extremely dense stellar remnants composed predominantly of neutrons, have been the subject of numerous astrophysics studies because of their fascinating underlying physics and their possible astrophysical outcomes. Most previous studies aimed at simulating and better understanding these events relied on computational methods designed to solve Einstein's equations of general relativity under extreme conditions, such as those present during neutron star mergers.

Researchers at the Max Planck Institute for Gravitational Physics (Albert Einstein Institute), the Yukawa Institute for Theoretical Physics, Chiba University, and Toho University recently performed the longest simulation of a binary neutron star merger to date. They used a neutrino-radiation magnetohydrodynamics (MHD) framework, which models the interactions among magnetic fields, high-density matter, and neutrinos.

Their simulation, outlined in Physical Review Letters, reveals the emergence of a magnetically dominated jet from the merger, followed by the collapse of the binary neutron star system into a black hole.