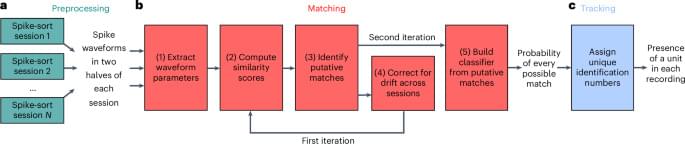

The Hubbard model is a widely studied model in condensed matter theory and a formidable quantum problem. A team of physicists used deep learning to compress this problem, which previously required 100,000 equations, into just four equations without sacrificing accuracy. The study, titled “Deep Learning the Functional Renormalization Group,” was published on September 21 in Physical Review Letters.

Domenico Di Sante is the lead author of the study. Since 2021, he has been an Assistant Professor (tenure track) in the Department of Physics and Astronomy at the University of Bologna. He is also a Visiting Professor at the Center for Computational Quantum Physics (CCQ) at the Flatiron Institute in New York, supported by a Marie Skłodowska-Curie Actions (MSCA) grant, a program that encourages, among other things, the mobility of researchers.

He conducted the study with colleagues at the Flatiron Institute and other international researchers. The work has the potential to revolutionize the way scientists study systems containing many interacting electrons. In addition, if the method can be adapted to other problems, the approach could help design materials with desirable properties, such as superconductivity, or contribute to clean energy production.