A new way of mapping activity and connections between different regions of the brain has revealed fresh insights into how higher-order functions such as language, thought and attention are organized.

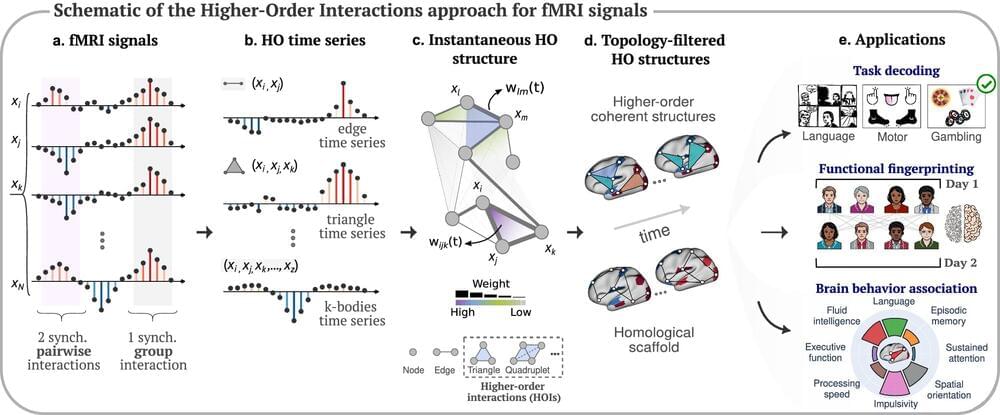

Traditional models of human brain activity represent interactions as pairs, each linking two brain regions. This is because modeling methods have not been developed far enough to describe more complex interactions that involve several regions at once.

A new approach, developed by researchers at the University of Birmingham, can take signals measured through neuroimaging and build accurate models from them that show how different brain regions contribute to specific functions and behaviors. The results are published in Nature Communications.