Quantum computers, systems that process information by leveraging quantum mechanical effects, could soon outperform classical computers on some complex computational problems. These computers rely on qubits, units of quantum information whose states can become linked with one another via a quantum mechanical effect known as entanglement.

Qubits are highly susceptible to noise in their surroundings, which can disrupt their quantum states and lead to computation errors. Quantum engineers have thus been trying to devise effective strategies to achieve fault-tolerant quantum computation, or in other words, to correct errors that arise when quantum computers process information.

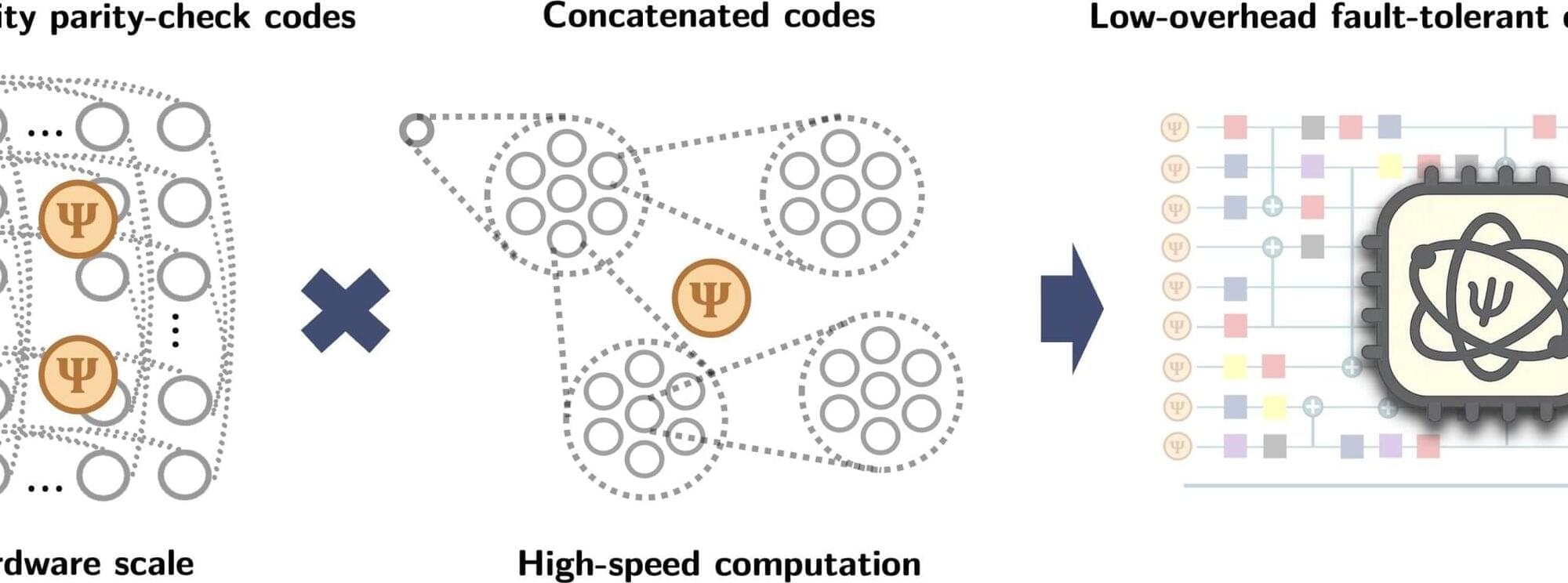

Existing approaches work either by reducing the number of extra physical qubits needed per logical qubit (i.e., space overhead) or by reducing the number of physical operations needed to perform a single logical operation (i.e., time overhead). Tackling both of these goals together, which would enable more scalable systems and faster computations, has so far proved challenging.