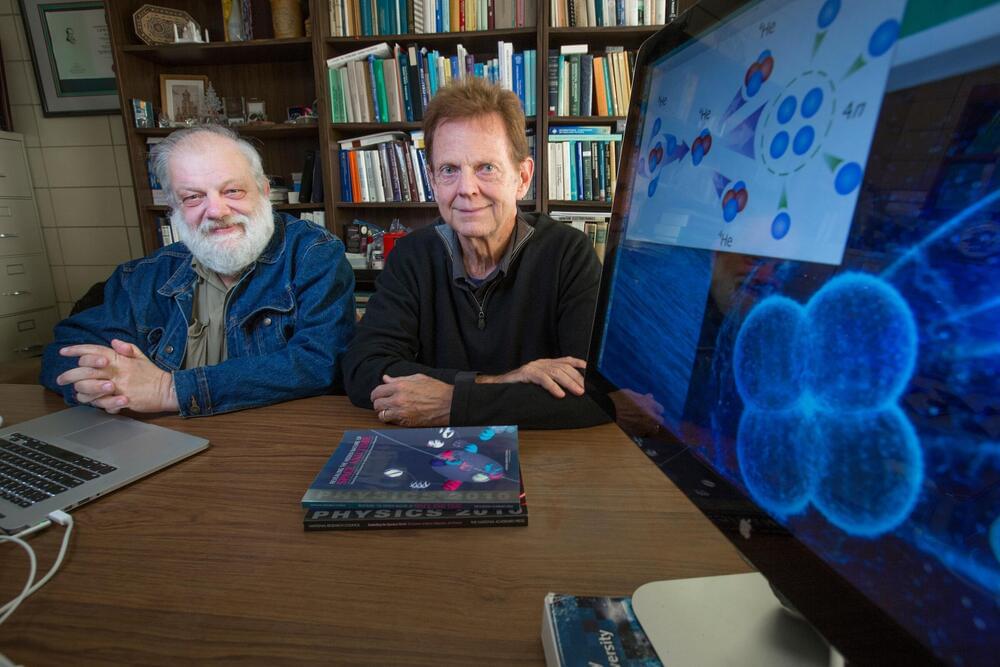

James Vary has been waiting for nuclear physics experiments to confirm the reality of a “tetraneutron” that he and his colleagues predicted, first announcing it during a presentation in the summer of 2014 and following up with a research paper in the fall of 2016.

“Whenever we present a theory, we always have to say we’re waiting for experimental confirmation,” said Vary, an Iowa State University professor of physics and astronomy.

In the case of four neutrons (very, very) briefly bound together in a temporary quantum state, or resonance, that confirmation has now arrived for Vary and an international team of theorists.