Some models are available to users without Chinese phone numbers, while open-source platforms provide other workarounds.

A Mormon Transhumanist has built a chatbot trained on his entire collection of writings, social media posts, and presentations.

I’ve merged with artificial intelligence. Well, I’m working on it. And I’m excited to share the results with you.

Trained on everything that I’ve written publicly since 2000, he might be better at Mormon Transhumanism than I am.

Friends, I’m excited to introduce you to LincGPT! This artificial intelligence, built on the OpenAI platform, is designed to engage with you on topics related to technological evolution, postsecular religion, and Mormon Transhumanism. I’ve trained LincGPT on all of my public writings since the year 2000. That includes the following:

An impressive application of large language models combined with other AI tools, such as text-to-speech, speech-to-text, and image recognition and captioning models.

We created a robot tour guide using Spot integrated with ChatGPT and other AI models to explore the robotics applications of foundation models.

Joscha Bach meets with Ben Goertzel to discuss cognitive architectures, AGI, and conscious computers in another theolocution on TOE.


LINKS MENTIONED:

- OpenCog (Ben’s AI company): https://opencog.org

- SingularityNET (Ben’s decentralized AI company): https://singularitynet.io

- Podcast w/ Joscha Bach on TOE: Joscha Bach: Time, Simulation Hypothe…

- Podcast w/ Ben Goertzel on TOE: Ben Goertzel: The Unstoppable Rise of…

- Podcast w/ Michael Levin and Joscha on TOE: Michael Levin Λ Joscha Bach: Collecti…

- Podcast w/ John Vervaeke and Joscha on TOE: Joscha Bach Λ John Vervaeke: Mind, Id…

- Podcast w/ Donald Hoffman and Joscha on TOE: Donald Hoffman Λ Joscha Bach: Conscio…

- Mindfest Playlist on TOE (AI and Consciousness): Mindfest (Ai & Consciousness Conference)

TIMESTAMPS:

- 00:00:00 Introduction.

- 00:02:23 Computation vs Awareness.

- 00:06:11 The paradox of language and self-contradiction.

- 00:10:05 The metaphysical categories of Charles Peirce.

- 00:13:00 Zen Buddhism’s category of zero.

- 00:14:18 Carl Jung’s interpretation of four.

- 00:21:22 Language as …

Are you ready for artificial intelligence?

Curt’s “String Theory Iceberg”: https://youtu.be/X4PdPnQuwjY

Main episode with Bach and Goertzel (October 2023): https://youtu.be/xw7omaQ8SgA?list=PLZ7ikzmc6zlN6E8KrxcYCWQIHg2tfkqvR

Consider signing up for TOEmail at https://www.curtjaimungal.org

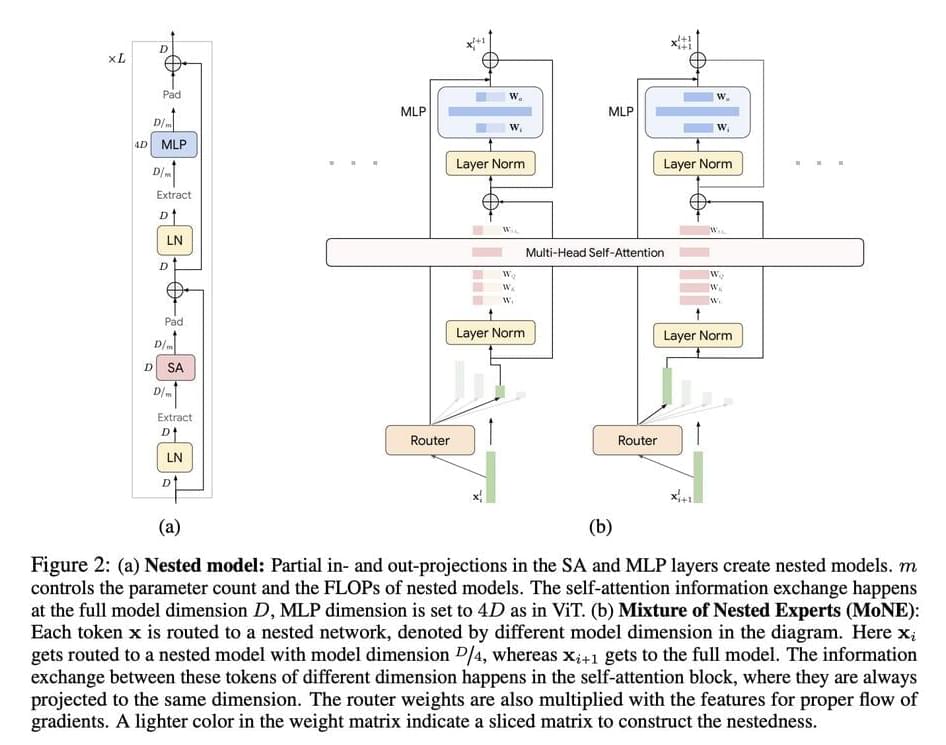

One of the significant challenges in AI research is the computational inefficiency in processing visual tokens in Vision Transformer (ViT) and Video Vision Transformer (ViViT) models. These models process all tokens with equal emphasis, overlooking the inherent redundancy in visual data, which results in high computational costs. Addressing this challenge is crucial for the deployment of AI models in real-world applications where computational resources are limited and real-time processing is essential.
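To make the cost concrete, here is a back-of-the-envelope sketch. It assumes a 224x224 input, 16x16 patches, and, for video, a 32-frame clip cut into 2-frame tubelets; these settings are illustrative choices, not figures from the text. Because every token is treated the same, the self-attention score matrix grows with the square of the token count.

```python
def vit_token_count(height: int, width: int, patch: int) -> int:
    """Patch tokens a ViT produces for one image (class token excluded)."""
    return (height // patch) * (width // patch)


def vivit_token_count(frames: int, height: int, width: int,
                      tubelet_t: int, patch: int) -> int:
    """Tokens for a video, assuming ViViT-style tubelet embedding
    (each token covers tubelet_t frames and one spatial patch)."""
    return (frames // tubelet_t) * vit_token_count(height, width, patch)


def attention_pairs(num_tokens: int) -> int:
    """Entries in one self-attention score matrix: every token attends to every token."""
    return num_tokens * num_tokens


# Illustrative settings (assumptions, not taken from the source).
image_tokens = vit_token_count(224, 224, 16)           # 14 * 14 tokens
video_tokens = vivit_token_count(32, 224, 224, 2, 16)  # 16 time steps * 196 tokens
print(image_tokens, attention_pairs(image_tokens))
print(video_tokens, attention_pairs(video_tokens))
```

Going from one image to a 32-frame clip multiplies the token count by 16 (196 to 3,136) but the attention-score entries by 256 (38,416 to 9,834,496), which is why redundant visual tokens are so expensive to process at full weight.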

Current methods such as ViTs and Mixture of Experts (MoE) models have been effective at processing large-scale visual data, but they come with significant limitations. ViTs treat all tokens equally, which leads to unnecessary computation. MoEs improve scalability by conditionally activating only parts of the network for each input, so inference-time cost stays roughly fixed as the parameter count grows. However, they carry a larger parameter footprint and cannot reduce computational cost unless some tokens are skipped entirely. In addition, these models usually use experts with uniform computational capacity, which limits their ability to allocate compute dynamically according to token importance.
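The conditional activation that MoEs rely on can be sketched as top-k gating: a router scores every expert for each token, and only the k best-scoring experts actually run. Below is a minimal pure-Python sketch, assuming scalar "tokens" and toy experts; `top_k_route`, `moe_layer`, and the experts are hypothetical illustrations, not code from any of the models discussed.

```python
import math
from typing import Callable, List, Sequence, Tuple


def softmax(logits: List[float]) -> List[float]:
    """Numerically stable softmax over one token's router logits."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def top_k_route(router_logits: List[float], k: int) -> List[Tuple[int, float]]:
    """Pick the k highest-probability experts for one token.

    Returns (expert_index, renormalized_weight) pairs, best first. Only these
    k experts run for the token, so per-token compute depends on k rather
    than on the total number of experts.
    """
    probs = softmax(router_logits)
    chosen = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in chosen)
    return [(i, probs[i] / norm) for i in chosen]


def moe_layer(token: float, router_logits: List[float],
              experts: Sequence[Callable[[float], float]], k: int) -> float:
    """Weighted sum of the selected experts' outputs; unselected experts never run."""
    return sum(w * experts[i](token) for i, w in top_k_route(router_logits, k))


# Hypothetical toy experts of identical cost: each just scales its input.
experts = [lambda x, s=s: s * x for s in (1.0, 2.0, 3.0, 4.0)]
logits = [0.1, 2.0, 0.3, 1.5]  # made-up router scores for one token
print(top_k_route(logits, k=2))
print(moe_layer(1.0, logits, experts, k=2))
```

Each token pays for k experts no matter how many exist, which is how MoEs add parameters without adding inference cost. Every token still receives exactly k experts of the same size, though, so this router cannot give easy or redundant tokens less compute: that is the uniform-capacity limitation described above.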

Here they come… 🦾🤖

And this shows one of the many ways in which the Economic Singularity is rushing at us. The 🦾🤖 Bots are coming soon to a job near you.

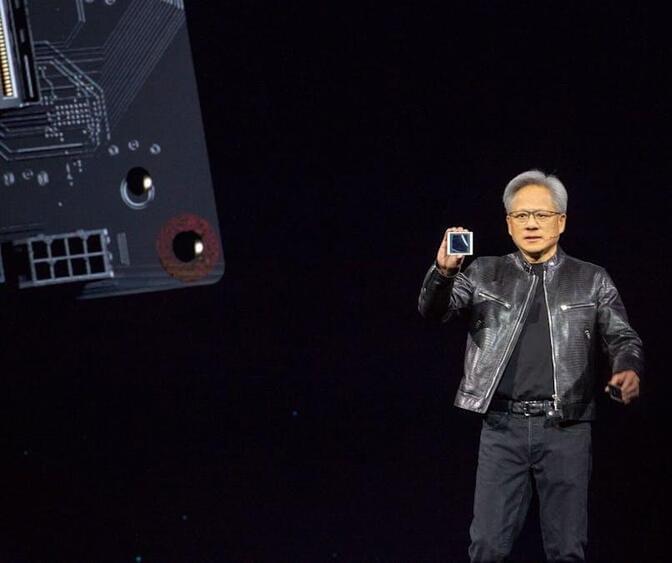

NVIDIA unveiled a suite of services, models, and computing platforms designed to accelerate the development of humanoid robots globally. Key highlights include:

- NVIDIA NIM™ Microservices: These containers, powered by NVIDIA inference software, streamline simulation workflows and reduce deployment times. New AI microservices, MimicGen and Robocasa, enhance generative physical AI in Isaac Sim™, built on NVIDIA Omniverse.

- NVIDIA OSMO Orchestration Service: A cloud-native service that simplifies and scales robotics development workflows, cutting cycle times from months to under a week.

- AI-Enabled Teleoperation Workflow: Demonstrated at SIGGRAPH 2024, this workflow generates synthetic motion and perception data from minimal human demonstrations, saving time and costs in training humanoid robots.

NVIDIA’s comprehensive approach includes building three computers to empower the world’s leading robot manufacturers: NVIDIA AI and DGX to train foundation models, Omniverse to simulate and refine AI models in a physically based virtual environment, and Jetson Thor, a robot supercomputer. The new NIM microservices for generative AI in robot simulation further accelerate humanoid robot development.

Nvidia’s upcoming artificial intelligence chips will be delayed by three months or more due to design flaws, a snafu that could affect customers such as Meta Platforms, Google, and Microsoft, which have collectively ordered tens of billions of dollars’ worth of the chips, according to two people who help produce the chip and server hardware for it.

Nvidia this week told Microsoft, one of its biggest customers, and another large cloud provider about a delay involving the most advanced AI chip in its new Blackwell series of chips, according to a Microsoft employee and another person with direct knowledge.