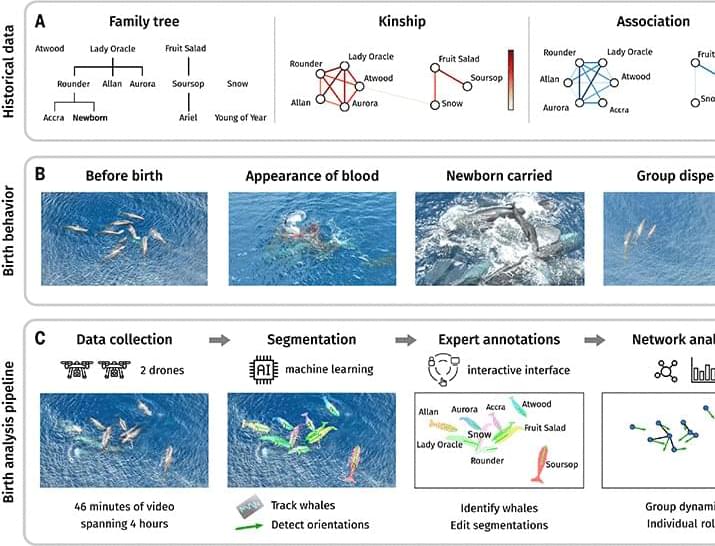

In an unprecedented observation, researchers reporting in Science captured the birth of a sperm whale calf. They documented how 11 whales from two normally separate family groups coordinated closely to support the newborn for hours after its arrival.

These findings offer quantitative evidence of direct communal caregiving in cetaceans. They suggest that short-term, highly coordinated cooperation during critical moments such as birth may play a foundational role in maintaining the complex social structures seen in sperm whale societies.

Birth and neonatal care are particularly revealing contexts for understanding the emergence of cooperation. Cetaceans are long-lived and produce few offspring. Calves are born infrequently and represent a major maternal investment, and calf survival depends heavily on immediate support after birth and on early caregiving (9). Births therefore offer critical opportunities to study how individuals coordinate in high-stakes contexts. Yet direct quantitative observations of sperm whale births remain virtually absent (14): only four sperm whale births have been reported over the past 60 years, all of them either anecdotal or whaling-related (15–18).

Within the matrilineal social units of sperm whales, individuals take turns socializing, foraging, and caring for calves across years (19–24). Decades of observational work (19, 21, 22, 25–28) have pointed to communal allocare for calves as a central mechanism driving selection for sociality in this species. However, although communal defense and shared parental care have been hypothesized to underpin the evolution of sperm whale sociality (19, 22, 23, 26), these hypotheses have lacked direct empirical support from observations of births. Newborns are assumed to be negatively buoyant (20, 29) and are likely to require immediate physical support to breathe, which may heighten the evolutionary importance of cooperative allocare within units (26, 30). Under this framework, the period around birth is a dangerous window for mothers and newborns, during which selection is likely to act strongly.

Here, we present a high-resolution, multiscale analysis of a sperm whale birth event that integrates drone-based videography, machine learning, and longitudinal association and kinship data. We quantified how individuals from two distinct matrilines coordinated around the mother and newborn by tracking physical support, proximity, orientation, and role distribution over time. Our results suggest that kin and non-kin engaged in sustained, cooperative postnatal care, taking turns to support the newborn and maintain group cohesion, in contrast to the kin-segregated foraging patterns documented previously (21). These findings provide rare quantitative evidence of direct allocare in cetaceans and lend support to the hypothesis that transient, structured cooperation during birth is a key mechanism sustaining complex sociality in sperm whales.