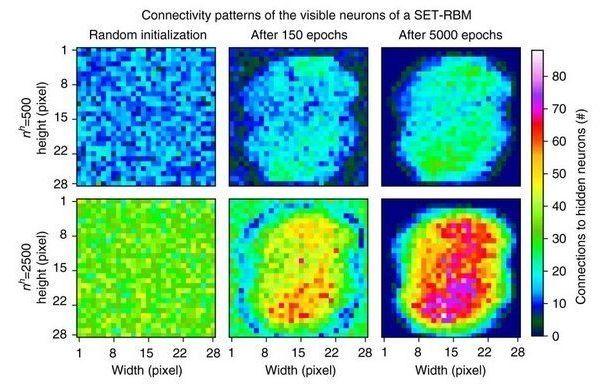

Lattice QCD is not only teaching us how the strong interactions lead to the overwhelming majority of the mass of normal matter in our Universe, but holds the potential to teach us about all sorts of other phenomena, from nuclear reactions to dark matter.

Later today, November 7th, physics professor Phiala Shanahan will be delivering a public lecture from Perimeter Institute, and we'll be live-blogging it right here at 7 PM ET / 4 PM PT. You can watch the talk right here, and follow along with my commentary below. Shanahan is an expert in theoretical nuclear and particle physics and specializes in supercomputer work involving QCD, and I'm so curious what else she has to say.