CAR T moves beyond cancer, targeting autoimmune disease with immune system reset

At age 49, Jan Janisch-Hanzlik’s multiple sclerosis was destroying her freedom to live the life she wanted. She gave up her active nursing job for a desk role. Frequent falls made her afraid to carry her grandchildren. She had to move to a bigger house to make room for the wheelchair she feared she might end up needing full-time.

Even the best available medication wasn’t improving Janisch-Hanzlik’s symptoms, and she worried they’d only get worse. So when she learned about a trial of CAR T cell therapy at the University of Nebraska Medical Center in Omaha, close to the city of Blair where she lives, she phoned the clinic every other month until they were ready to enroll her as the first patient.

Originally designed to target and wipe out cancer by reprogramming the patient’s immune cells, CAR T is now being offered to patients in hundreds of clinical trials for autoimmune conditions like multiple sclerosis, lupus, Graves’ disease, vasculitis and many others. The hope is that CAR T can duplicate the success it has demonstrated in a range of blood cancers by hunting down and eliminating cells that target the self in autoimmune diseases. This would essentially reset the body’s defenses to a state like the one that existed before the disease took hold.

The Transmachinist Far Future

An exploration of the possibility of machines wishing to become human and biological in the far future.

AI Agent Benchmark for Real-World Professional Workflows

To solve this “utility problem,” researchers have introduced a rigorous new testing ground called Agents’ Last Exam (ALE). The name carries a dual meaning: it acts as a final graduation exam to prove an AI agent is actually ready for corporate deployment, and it represents the absolute frontier of what today’s technology can handle.

The creators of ALE don’t intend for it to be a static, one-time leaderboard. Designed as a “living benchmark,” its pool of tests will continuously grow as new industries and workflows evolve. Ultimately, the goal of Agents’ Last Exam is to shift the AI industry’s focus away from winning abstract academic trophies and toward creating digital assistants capable of driving genuine, measurable economic growth.

Challenge and measure AI agents on economically valuable and real-world tasks.

Agents’ Last Exam is building the largest-scale, broadest-coverage agent evaluation benchmark to date, measuring performance on long-horizon, economically valuable tasks with verifiable outcomes. Led by Berkeley RDI and 300+ industry experts, it now spans all 55 targeted sub-industries, covering most major fields of professional work performed on a computer, with 1,500+ tasks collected toward a 5,000-task target. The aim is to keep scores objective, comparable, and meaningful across domains.

AI is incapable of telling the truth

We worry that AI will spread misinformation, but the real problem runs deeper: AI is incapable of telling the truth at all. Philosophers Bun-Sun Kim and Hongjoon Jo draw on Foucault and Heidegger to argue that humans speak truthfully because our finite, mortal existence is at stake in every word we say. AI, lacking a body, anxiety, or a conscience, risks nothing — it just recombines the internet’s idle talk into statistically plausible text, with no self to reveal. Outsourcing our communication to AI doesn’t just degrade information; it traps us in an endless loop of crowd-sourced mimicry, and threatens our capacity for genuine thought.

ChatGPT can answer complex questions and even seem to hold conversations. But can it tell the truth?

In an era where AI can answer virtually any human question, we must examine whether AI language can truly contain truth. Since the Dartmouth Conference of 1956, we’ve witnessed dramatic technological evolution—from the AI Winter of the 1970s and 80s to today’s sophisticated language models like ChatGPT that generate remarkably human-like text. As we increasingly delegate communication to artificial, rather than human, entities, a fundamental question emerges: Can AI’s artificial language capture the essence of truth conveyed by human discourse?

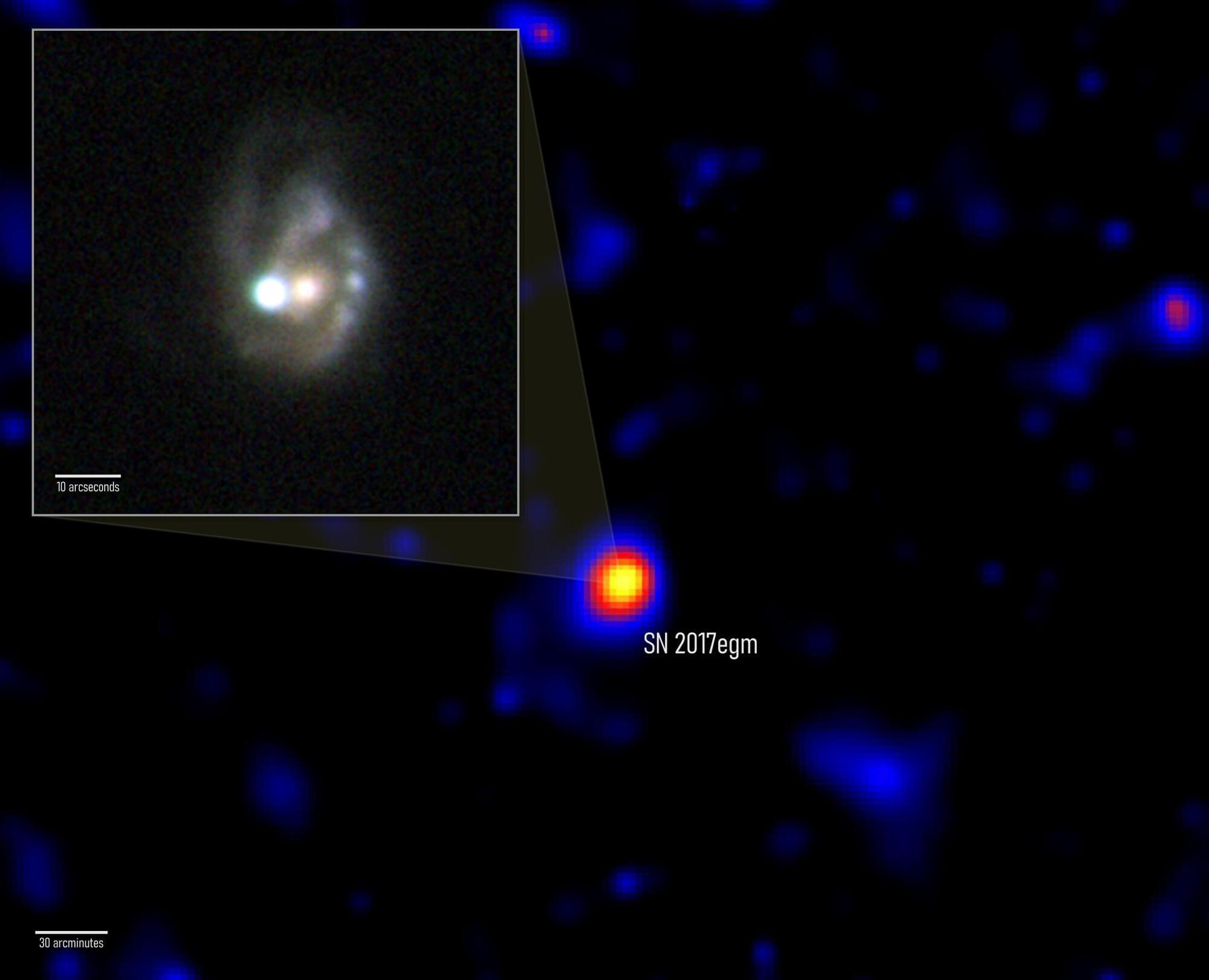

NASA’s Fermi telescope reveals the power source behind monster supernovae

NASA’s Fermi telescope has detected what may be the first confirmed gamma-ray signal from a superluminous supernova — one of the most extreme explosions in the universe. Scientists believe the blast was powered by a rapidly spinning magnetar, an exotic neutron star with unbelievably strong magnetic fields. The event, called SN 2017egm, erupted 440 million light-years away and may help explain why some supernovae become extraordinarily bright.

NASA’s Fermi Gamma-ray Space Telescope may have finally uncovered what powers some of the brightest stellar explosions ever observed. After studying years of data, an international research team found strong evidence that a rare superluminous supernova was energized by an extremely magnetic neutron star formed during the star’s collapse.

The Fermi mission is part of NASA’s network of observatories designed to track changing events across the universe and help scientists better understand how cosmic phenomena work.

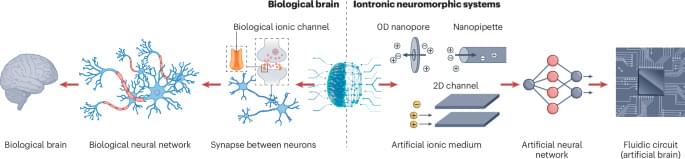

Nanofluidic ionic memory for next-generation computing

In the brain, memory involves release of neurotransmitters and transport of ions through nanoconfined channels. This Perspective discusses how nanofluidic memristors emulate this confined ion transport, highlighting the materials, design strategies and challenges involved in developing brain-inspired computing technologies.

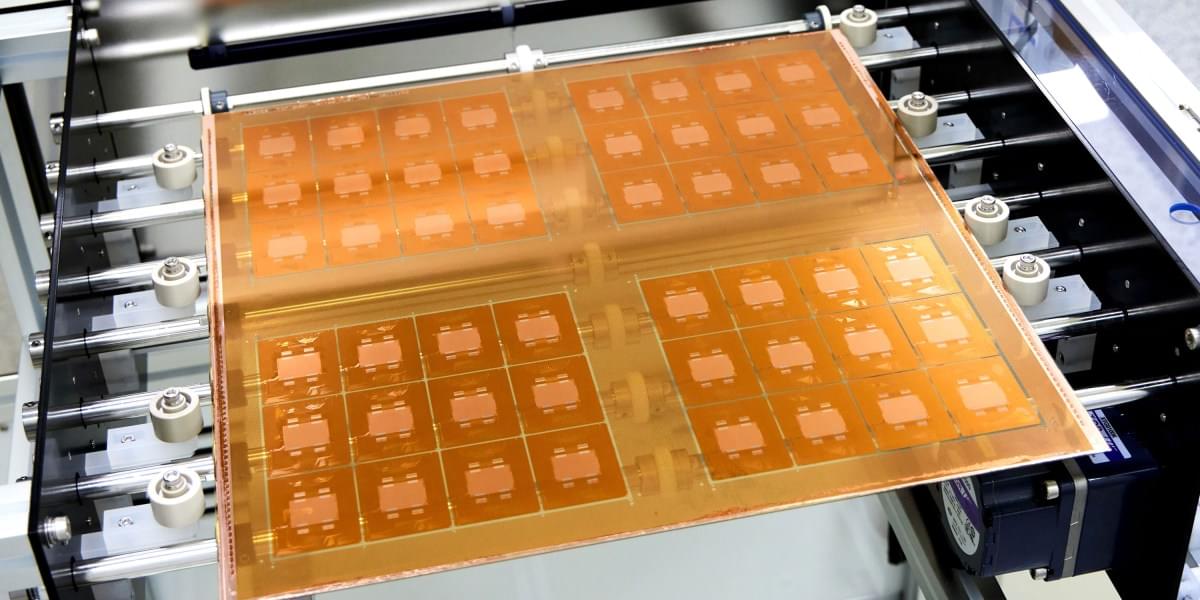

Kyocera develops breakthrough multilayer ceramic core substrate for advanced AI semiconductors

I still think ceramics could be very useful for reducing the need for global mining operations that rely heavily on rare materials, if the same chips can be made from ceramics.

To be shown at ECTC 2026, May 26–29 in Orlando, USA, the new substrate technology delivers superior rigidity and circuit miniaturization for next-gen data centers, AI, and ASIC packaging.

Future AI chips could be built on glass

The idea is to use glass as the substrate, or layer, on which multiple silicon chips are connected. This form of “packaging” is an increasingly popular way to build computing hardware, because it lets engineers combine specialized chips designed for specific functions into a single system. But it presents challenges, including the fact that hardworking chips can run so hot they physically warp the substrate they’re built on. This can lead to misaligned components and may reduce how efficiently the chips can be cooled, leading to damage or premature failure.

“As AI workloads surge and package sizes expand, the industry is confronting very real mechanical constraints that impact the trajectory of high-performance computing,” says Deepak Kulkarni, a senior fellow at the chip design company Advanced Micro Devices (AMD). “One of the most fundamental is warpage.”

That’s where glass comes in. It can handle the added heat better than existing substrates, and it will let engineers keep shrinking chip packages—which will make them faster and more energy efficient. It “unlocks the ability to keep scaling package footprints without hitting a mechanical wall,” says Kulkarni.