Gravitational field equations describing binary black holes can be recast in a form resembling coupled Maxwell’s equations for electrodynamics.

Eric Ghysels made a name for himself in financial econometrics and time-series analysis. Now he translates financial models into quantum algorithms.

Economist Eric Ghysels has spent most of his career fascinated by a fundamental problem in the financial industry: figuring out how to put a price on any financial asset whose future value depends on market conditions. Ghysels, a professor at the University of North Carolina at Chapel Hill, has now set himself a new problem: studying the impact that quantum computing could have on solving asset pricing, portfolio optimization, and other computationally intensive financial problems.

He admits that nobody knows when quantum computers will have commercially viable applications, but, he says, it’s important to invest now. Physics Magazine spoke with Ghysels to learn why.

“An equation, perhaps no more than one inch long, that would allow us to, quote, ‘Read the mind of God.’”


What if everything we know about computing is on the verge of collapsing? Physicist Michio Kaku explores the next wave that could render traditional tech obsolete: Quantum computing.

Quantum computers, Kaku argues, could unlock the secrets of life itself and could finally allow us to advance Albert Einstein’s quest for a theory of everything.

00:00:00 Quantum computing and Michio’s book Quantum Supremacy
00:01:19 Einstein’s unfinished theory

00:03:45 String theory as the \.

It doesn’t take an expert photographer to know that the steadier the camera, the sharper the shot. But that conventional wisdom isn’t always true, according to new research led by Brown University engineers.

The researchers showed that with the help of a clever algorithm, a camera in motion can produce higher-resolution images than a camera held completely still. The new image processing technique could enable gigapixel-quality images from run-of-the-mill camera hardware, as well as sharper imaging for scientific or archival photography.

“We all know that when you shake a camera, you get a blurry picture,” said Pedro Felzenszwalb, a professor of engineering and computer science at Brown. “But what we show is that an image captured by a moving camera actually contains additional information that we can use to increase image resolution.”

Quantum computers promise enormous computational power, but the nature of quantum states makes computation and data inherently “noisy.” Rice University computer scientists have developed algorithms that account for noise that is not just random but malicious. Their work could help make quantum computers more accurate and dependable.

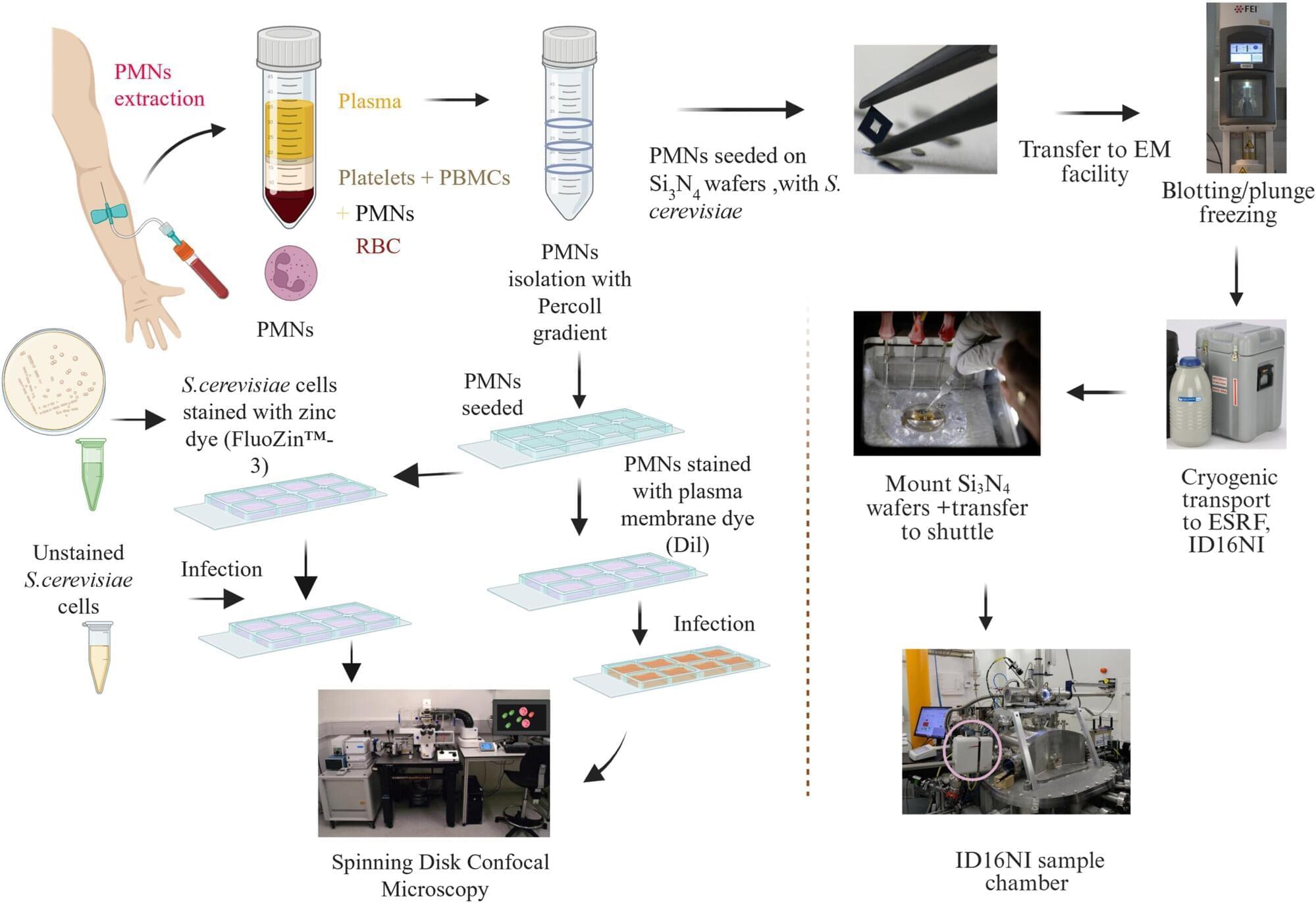

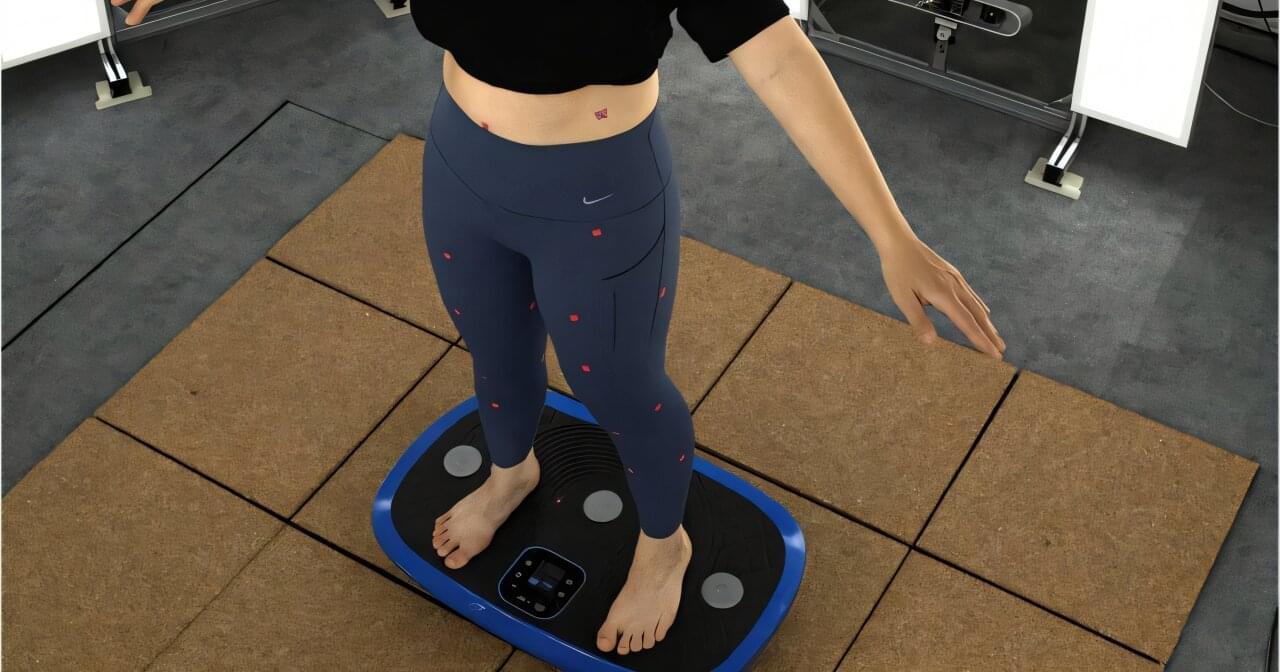

Soft tissue deformation during body movement has long posed a challenge to achieving optimal garment fit and comfort, particularly in sportswear and functional medical wear.

Researchers at The Hong Kong Polytechnic University (PolyU) have developed a novel anthropometric method that delivers highly accurate measurements to enhance the performance and design of compression-based apparel.

Prof. Joanne YIP, Associate Dean and Professor of the School of Fashion and Textiles at PolyU, and her research team pioneered this anthropometric method using image recognition algorithms to systematically assess tissue deformation while minimizing motion-related errors.

The Faiss library is an open source library, developed by Meta FAIR, for efficient vector search and clustering of dense vectors. Faiss pioneered vector search on GPUs, as well as the ability to seamlessly switch between GPUs and CPUs. It has made a lasting impact in both research and industry, being used as an integrated library in several databases (e.g., Milvus and OpenSearch), machine learning libraries, data processing libraries, and AI workflows. Faiss is also used heavily by researchers and data scientists as a standalone library, often paired with PyTorch.

Collaboration with NVIDIA

Three years ago, Meta and NVIDIA worked together to enhance the capabilities of vector search technology and to accelerate vector search on GPUs. Previously, in 2016, Meta had incorporated high-performing vector search algorithms made for NVIDIA GPUs: GpuIndexFlat, GpuIndexIVFFlat, and GpuIndexIVFPQ. After the partnership, NVIDIA rapidly contributed GpuIndexCagra, a state-of-the-art graph-based index designed specifically for GPUs. In its latest release, Faiss 1.10.0 officially includes these algorithms from the NVIDIA cuVS library.
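To make "vector search" concrete, here is a minimal pure-Python sketch of the exhaustive nearest-neighbor search that a flat index conceptually performs: compare the query against every stored vector and keep the k closest. This is an illustration only, not Faiss code or its API. The names `l2_squared` and `flat_search` are hypothetical, and a real library would batch this work on CPUs or GPUs rather than loop in Python.

```python
import heapq


def l2_squared(a, b):
    """Squared Euclidean distance between two equal-length vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def flat_search(database, query, k):
    """Return up to k (index, squared_distance) pairs, nearest first.

    Hypothetical helper: scans every vector in `database`, which is the
    brute-force strategy an exact ("flat") index relies on.
    """
    scored = (
        (l2_squared(vec, query), idx) for idx, vec in enumerate(database)
    )
    # nsmallest keeps only the k best candidates while scanning.
    nearest = heapq.nsmallest(k, scored)
    return [(idx, dist) for dist, idx in nearest]


# Usage: four 2-D vectors, find the two nearest to the query.
vectors = [[0.0, 0.0], [1.0, 1.0], [5.0, 5.0], [0.9, 1.2]]
for idx, dist in flat_search(vectors, [1.0, 1.0], k=2):
    print(idx, dist)
```

Exhaustive search is exact but scales linearly with the number of stored vectors, which is one reason approximate structures such as inverted-file or graph-based indexes exist.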

Srinivasa Ramanujan Aiyangar[a] FRS (22 December 1887 – 26 April 1920) was an Indian mathematician. He is widely regarded as one of the greatest mathematicians of all time, despite having almost no formal training in pure mathematics. He made substantial contributions to mathematical analysis, number theory, infinite series, and continued fractions, including solutions to mathematical problems then considered unsolvable.

Ramanujan initially developed his own mathematical research in isolation. According to Hans Eysenck, “he tried to interest the leading professional mathematicians in his work, but failed for the most part. What he had to show them was too novel, too unfamiliar, and additionally presented in unusual ways; they could not be bothered”.[4] Seeking mathematicians who could better understand his work, in 1913 he began a mail correspondence with the English mathematician G. H. Hardy at the University of Cambridge, England. Recognising Ramanujan’s work as extraordinary, Hardy arranged for him to travel to Cambridge. In his notes, Hardy commented that Ramanujan had produced groundbreaking new theorems, including some that “defeated me completely; I had never seen anything in the least like them before”,[5] and some recently proven but highly advanced results.

During his short life, Ramanujan independently compiled nearly 3,900 results (mostly identities and equations).[6] Many were completely novel; his original and highly unconventional results, such as the Ramanujan prime, the Ramanujan theta function, partition formulae and mock theta functions, have opened entire new areas of work and inspired further research.[7] Of his thousands of results, most have been proven correct.[8] The Ramanujan Journal, a scientific journal, was established to publish work in all areas of mathematics influenced by Ramanujan,[9] and his notebooks, containing summaries of his published and unpublished results, have been analysed and studied for decades since his death as a source of new mathematical ideas.

The rapid advancement of artificial intelligence (AI) and machine learning systems has increased the demand for new hardware components that could speed up data analysis while consuming less power. As machine learning algorithms draw inspiration from biological neural networks, some engineers have been working on hardware that also mimics the architecture and functioning of the human brain.
