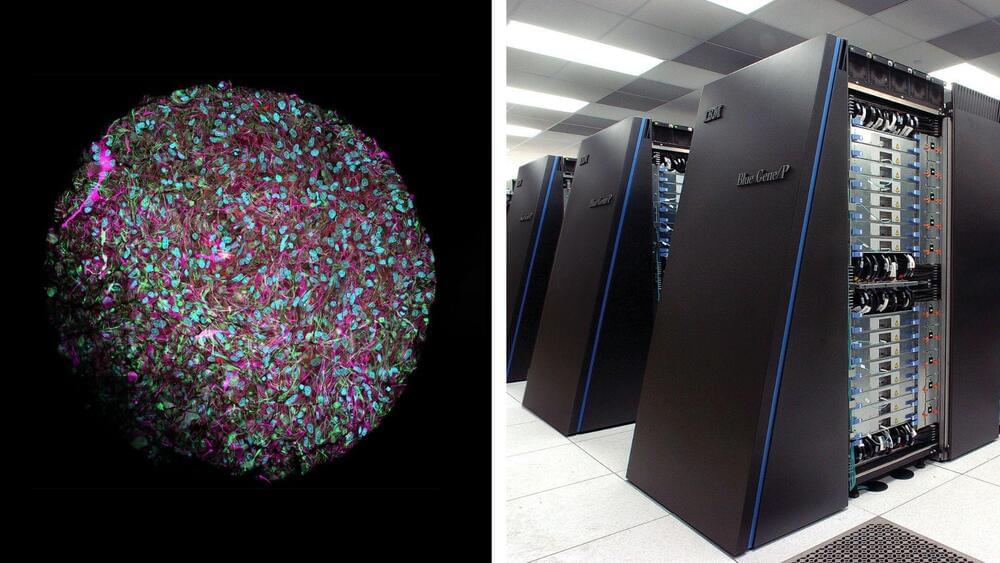

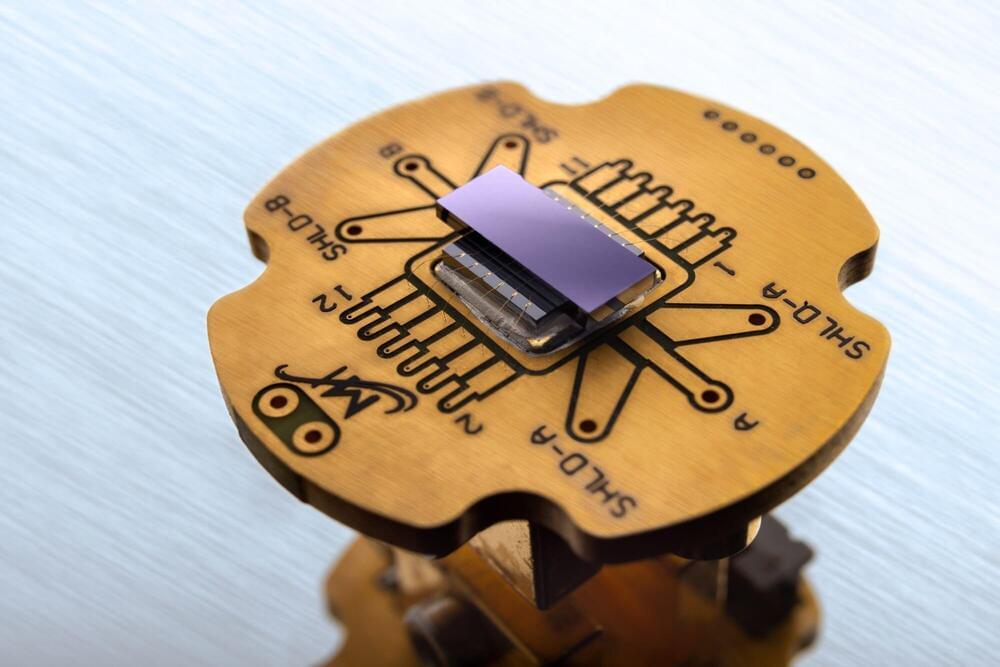

Physicists have created a novel type of analog quantum computer capable of addressing challenging physics problems that the most powerful digital supercomputers cannot solve.

The work is described in a study published in Nature Physics.
