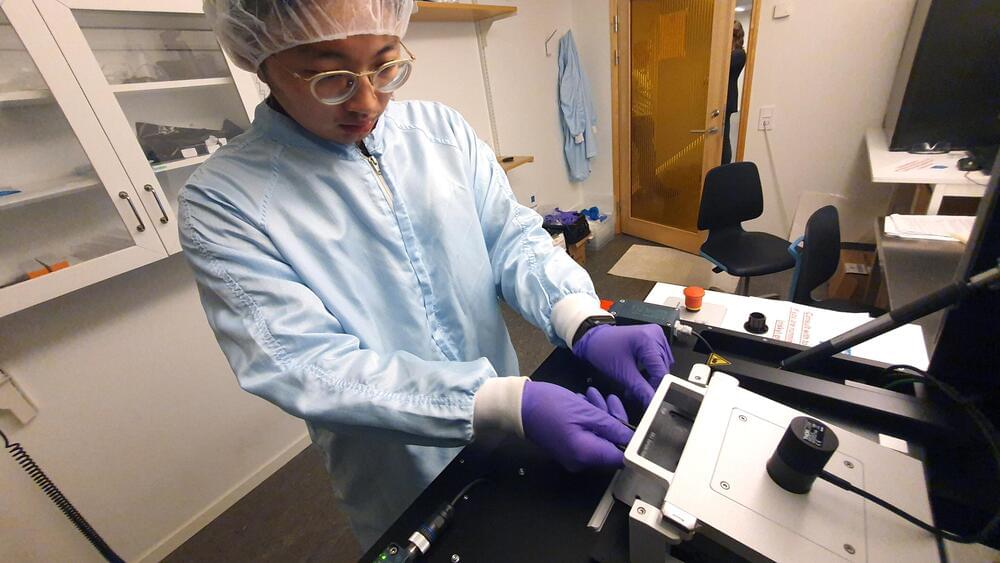

Interestingly, although Elon Musk’s Neuralink received a great deal of media attention, Synchron published results in January 2023 from its first-in-human study of four patients with severe paralysis. Each had received the company's first-generation Stentrode neuroprosthesis. The implant allowed participants to create digital switches for daily tasks such as sending texts and emails, banking online, and communicating their care needs. The paper appeared in JAMA Neurology. Then, before September 2023, the first six US patients had the Synchron BCI implanted. Results from that US study are expected by late 2024.

Let’s return to Upgrade. Critics described the movie as “one part The Six Million Dollar Man, one part Death Wish revenge fantasy.” Death Wish is a 1974 American vigilante action thriller partly based on Brian Garfield’s 1972 novel of the same name. The Six Million Dollar Man, an American sci-fi television series from the 1970s based on Martin Caidin’s 1972 novel Cyborg, could be considered a landmark in the context of human-AI symbiosis, albeit a fictional one. Oscar Goldman’s opening line in the series was, “Gentlemen, we can rebuild him. We have the technology. We have the capability to make the world’s first bionic man… Better than he was before. Better, stronger, faster.” The term “cyborg,” a portmanteau of “cybernetic” and “organism,” was coined in 1960 by two scientists, Manfred Clynes and Nathan S. Kline.

Today, though, the “cyborg” no longer seems like a narrative of the distant future. Rather, it is very much a story of the present. Thanks to brain chip implants, we may be just inches away from becoming cyborgs, although Elon Musk believes that “we are already a cyborg to some degree,” and he may be right. Cyborgs, however, also pose threats, and the dystopian idea of being ruled by Big Brother continues to haunt us. Around the world, chip implants have already sparked heated debates on topics including privacy, law, technology, medicine, security, politics, and religion. USA Today published a piece headlined “You will get chipped—eventually” as early as August 2017. An article in The Atlantic in September 2018 discussed how microchip implants in general, not only brain chips, are evolving from a tech-geek curiosity into a legitimate health utility, and suggested that there may be fewer reasons to say “no.” Still, numerous concerns about privacy and cybersecurity remain. Policymakers will find it extremely difficult to write laws for a technology that is both so sensitive and so quickly evolving.